Italian — Full Ablation Study & Research Report

Detailed evaluation of all model variants trained on Italian Wikipedia data by Wikilangs.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

1. Tokenizer Evaluation

Results

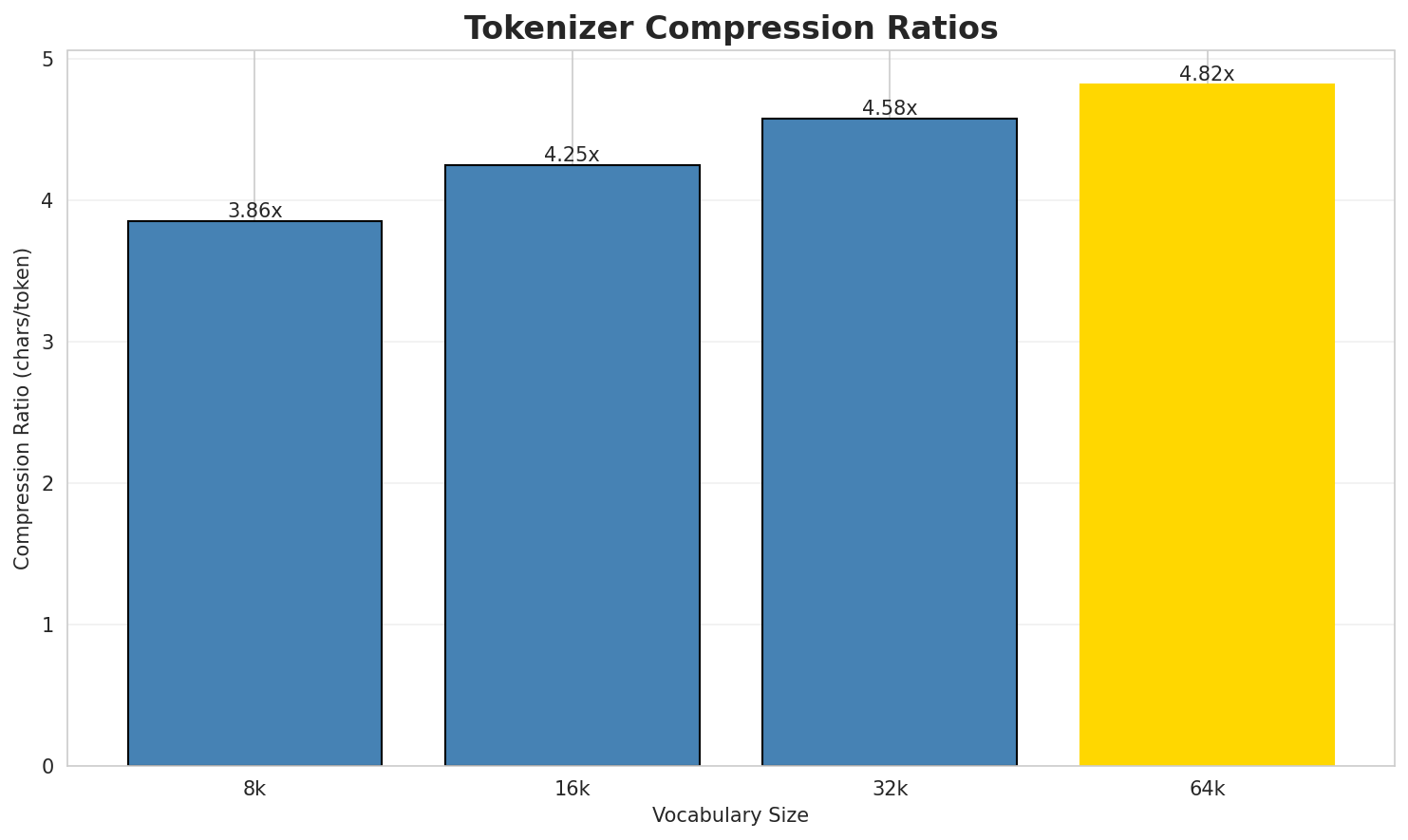

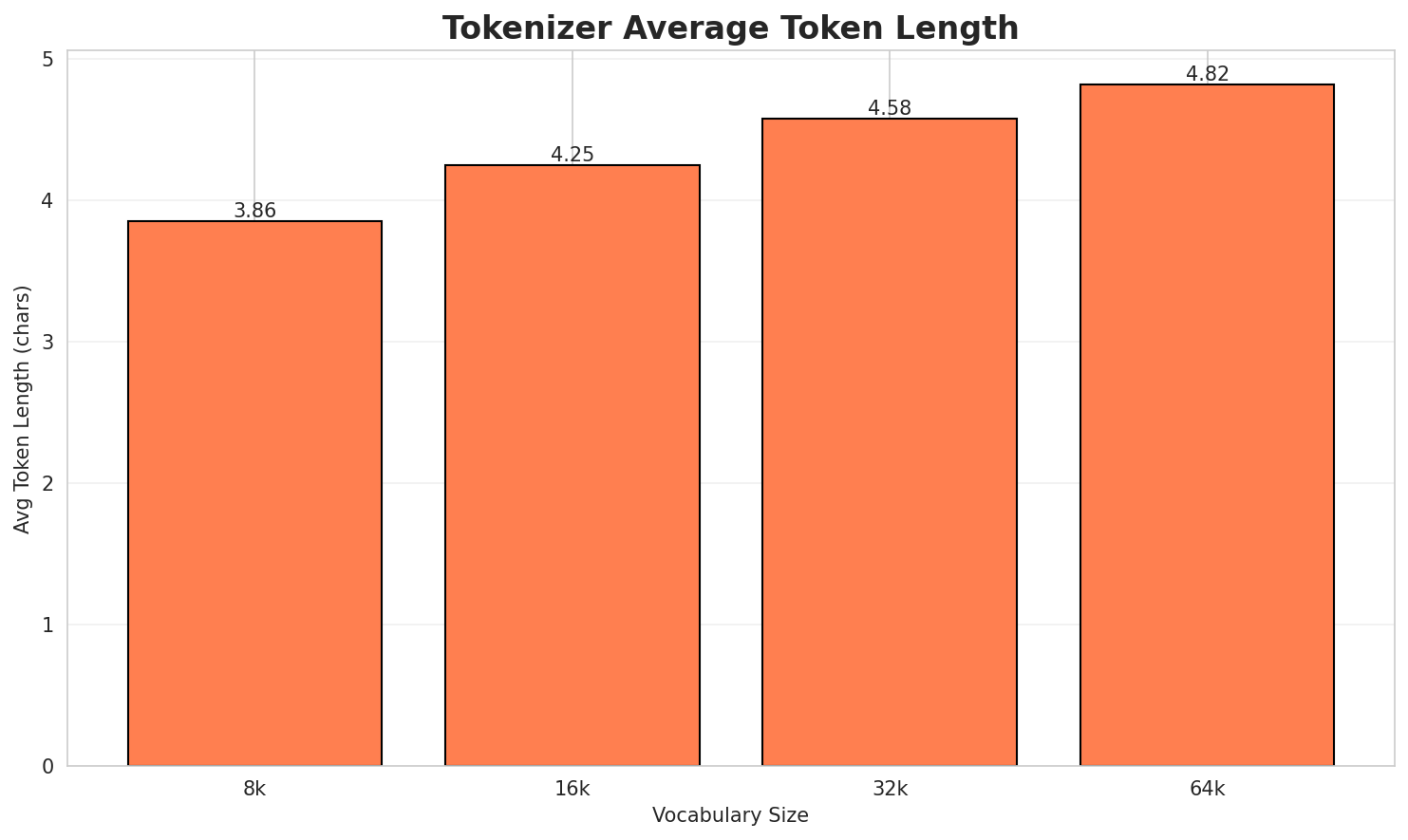

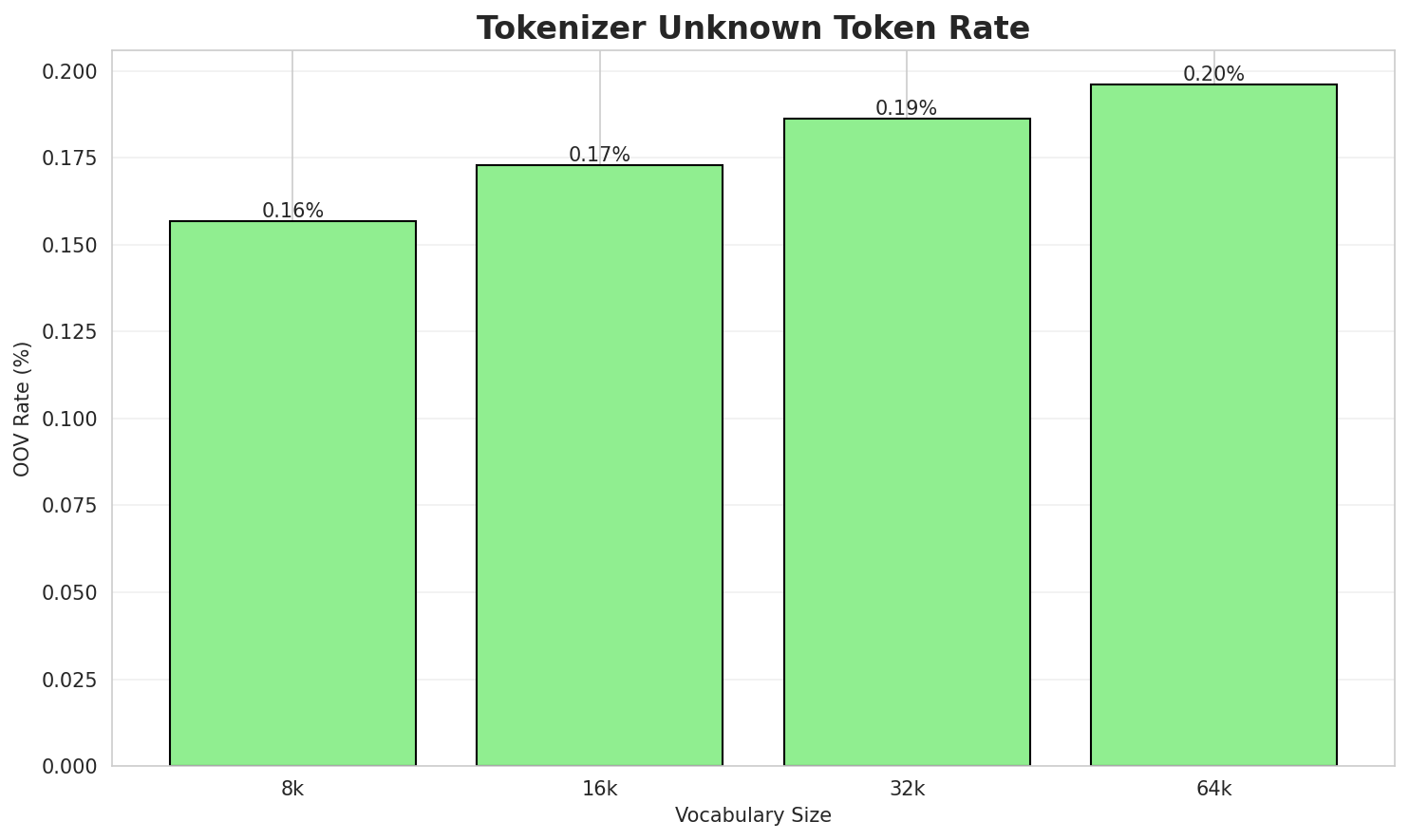

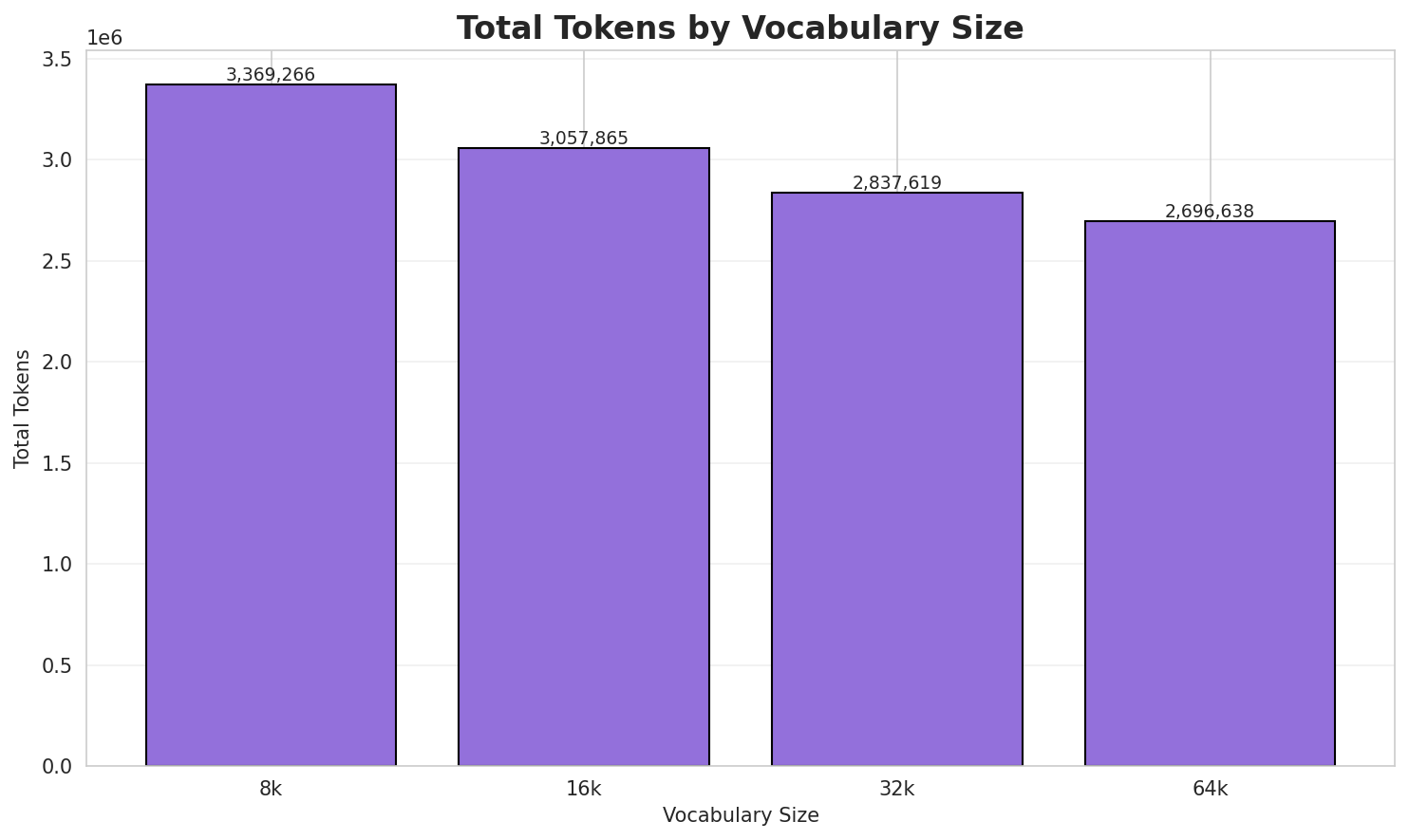

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.856x | 3.86 | 0.1569% | 3,369,266 |

| 16k | 4.248x | 4.25 | 0.1729% | 3,057,865 |

| 32k | 4.578x | 4.58 | 0.1863% | 2,837,619 |

| 64k | 4.817x 🏆 | 4.82 | 0.1960% | 2,696,638 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: Eventi, invenzioni e scoperte Viene inventato il Lapis Benjamin Franklin inventa...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁viene ▁inv entato ▁il ▁la ... (+29 more) | 39 |
| 16k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁viene ▁inventato ▁il ▁la pis ... (+21 more) | 31 |
| 32k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁viene ▁inventato ▁il ▁la pis ... (+17 more) | 27 |
| 64k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁viene ▁inventato ▁il ▁la pis ... (+17 more) | 27 |

Sample 2: Eventi, invenzioni e scoperte Roma - Inaugurazione del Colosseo Personaggi 81 Ro...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁roma ▁- ▁inaugu razione ▁del ... (+19 more) | 29 |
| 16k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁roma ▁- ▁inaugu razione ▁del ... (+19 more) | 29 |
| 32k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁roma ▁- ▁inaugurazione ▁del ▁colo ... (+18 more) | 28 |
| 64k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁roma ▁- ▁inaugurazione ▁del ▁colosseo ... (+16 more) | 26 |

Sample 3: Eventi, invenzioni e scoperte Fine della cattività avignonese A Vicenza venne sp...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁fine ▁della ▁ca tti vità ... (+23 more) | 33 |
| 16k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁fine ▁della ▁ca ttività ▁avi ... (+22 more) | 32 |
| 32k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁fine ▁della ▁ca ttività ▁avi ... (+22 more) | 32 |
| 64k | ▁eventi , ▁invenzioni ▁e ▁scoperte ▁fine ▁della ▁cattività ▁avignon ese ... (+18 more) | 28 |

Key Findings

- Best Compression: 64k achieves 4.817x compression

- Lowest UNK Rate: 8k with 0.1569% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

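- Recommendation: 32k vocabulary offers a reasonable balance between compression (4.578x) and vocabulary size for production use; 64k is the choice when compression is the priority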

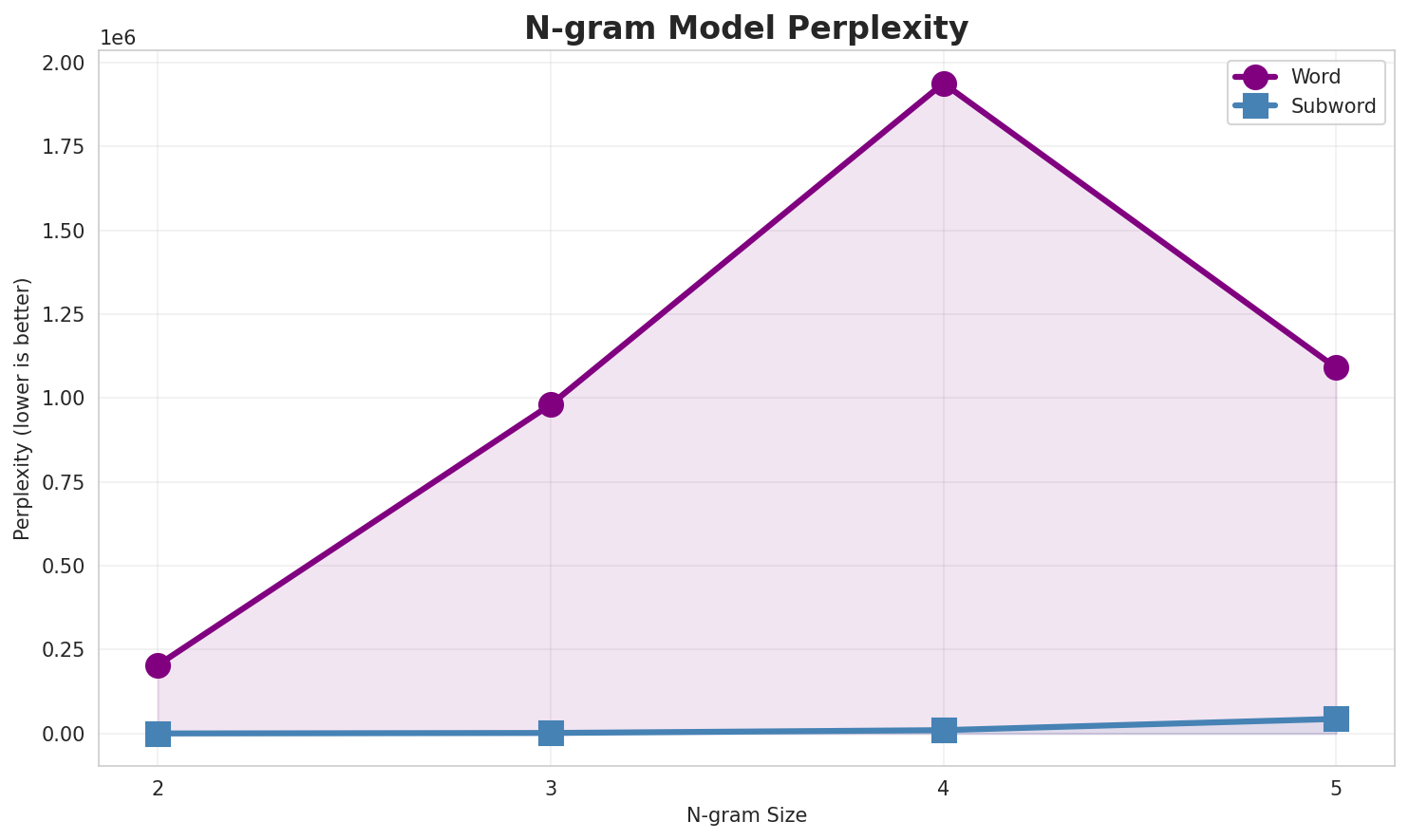

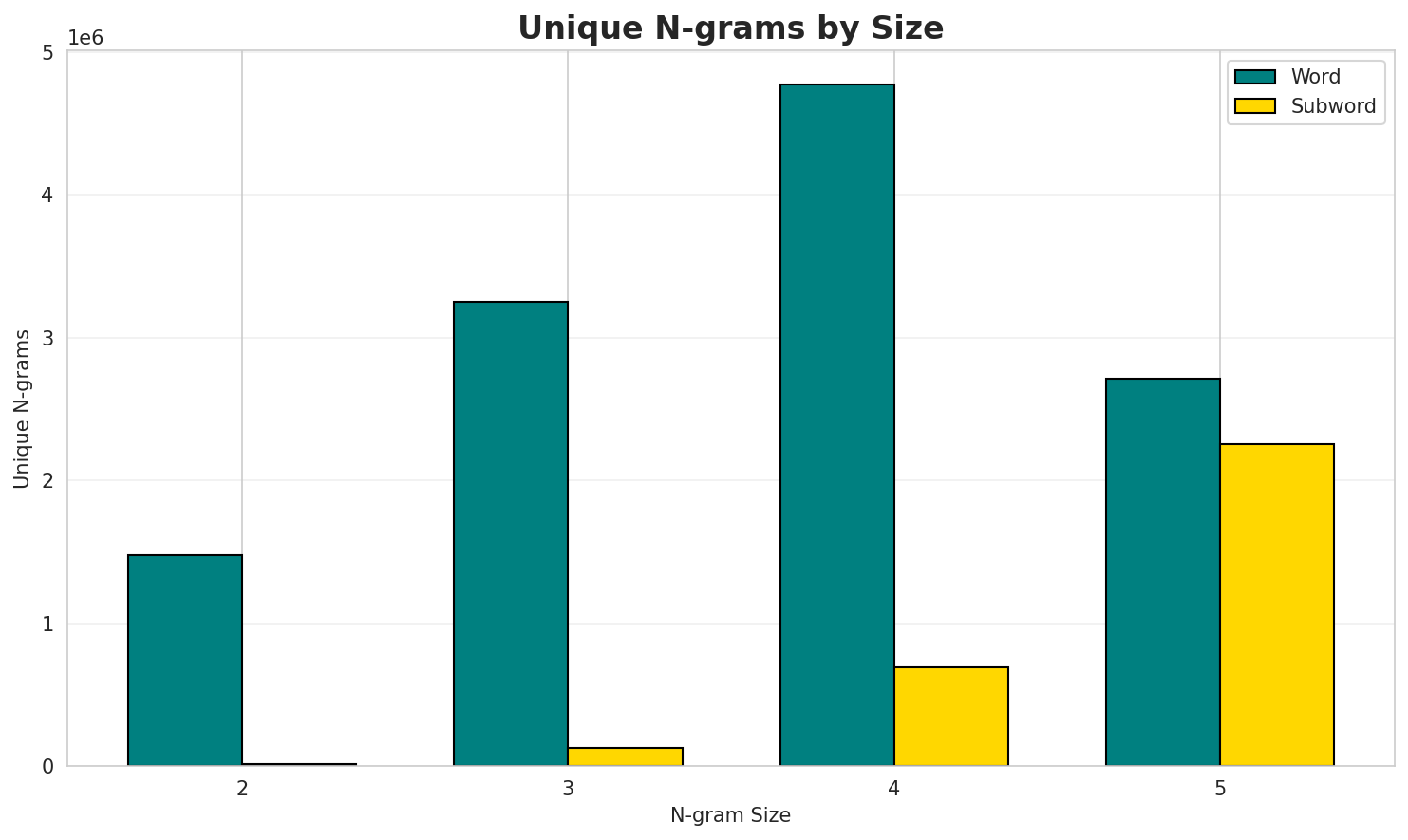

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 204,245 | 17.64 | 1,475,040 | 6.1% | 16.8% |

| 2-gram | Subword | 214 🏆 | 7.74 | 16,385 | 74.4% | 99.4% |

| 3-gram | Word | 980,193 | 19.90 | 3,253,104 | 3.2% | 7.9% |

| 3-gram | Subword | 1,722 | 10.75 | 125,759 | 29.1% | 78.9% |

| 4-gram | Word | 1,937,953 | 20.89 | 4,769,877 | 3.5% | 7.0% |

| 4-gram | Subword | 10,064 | 13.30 | 693,084 | 14.1% | 42.3% |

| 5-gram | Word | 1,090,157 | 20.06 | 2,714,110 | 5.0% | 9.7% |

| 5-gram | Subword | 43,596 | 15.41 | 2,255,172 | 7.7% | 24.4% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | per la | 97,286 |
| 2 | è un | 96,027 |
| 3 | di un | 94,634 |
| 4 | e il | 87,633 |
| 5 | altri progetti | 84,533 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | altri progetti collegamenti | 60,396 |
| 2 | progetti collegamenti esterni | 60,395 |
| 3 | è un comune | 49,422 |
| 4 | note altri progetti | 43,464 |
| 5 | società evoluzione demografica | 42,434 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | altri progetti collegamenti esterni | 60,395 |
| 2 | è un comune francese | 33,569 |
| 3 | abitanti situato nel dipartimento | 33,506 |
| 4 | un comune francese di | 33,266 |
| 5 | società evoluzione demografica note | 32,814 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | è un comune francese di | 33,067 |
| 2 | evoluzione demografica note altri progetti | 32,558 |
| 3 | società evoluzione demografica note altri | 32,545 |
| 4 | note altri progetti collegamenti esterni | 27,862 |
| 5 | demografica note altri progetti collegamenti | 18,549 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | e _ | 14,124,940 |
| 2 | a _ | 13,040,624 |
| 3 | i _ | 10,977,304 |
| 4 | o _ | 10,608,684 |
| 5 | _ d | 9,830,426 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ d i | 3,986,152 |
| 2 | _ d e | 3,607,817 |
| 3 | l a _ | 3,337,560 |
| 4 | d i _ | 3,263,476 |
| 5 | _ c o | 3,099,394 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ d i _ | 3,072,491 |
| 2 | _ d e l | 2,506,551 |
| 3 | l l a _ | 1,668,082 |
| 4 | _ i l _ | 1,519,530 |
| 5 | d e l l | 1,488,470 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ d e l l | 1,459,429 |
| 2 | e l l a _ | 1,154,387 |
| 3 | i o n e _ | 1,144,610 |
| 4 | _ d e l _ | 1,020,510 |
| 5 | z i o n e | 936,001 |

Key Findings

- Best Perplexity: 2-gram (subword) with 214

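- Entropy Trend: Increases with n-gram size in the table above (word: 17.64 bits at 2-gram, 20.89 at 4-gram, 20.06 at 5-gram; subword: 7.74 at 2-gram to 15.41 at 5-gram), so the higher-order distributions are more spread out, not more predictable

- Coverage: Top-1000 patterns cover from 7.0% (4-gram word) up to 99.4% (2-gram subword) of the corpus; the 5-gram subword model sits at 24.4%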

- Recommendation: 4-gram or 5-gram for best predictive performance
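The word and subword n-gram counts listed in this section can be illustrated with a few lines of counting. The sketch below is not the Wikilangs pipeline: it assumes lowercased whitespace tokenization for words and, judging only from entries such as `_ d i`, treats subword units as single characters with `_` standing in for a space. The function names `word_ngrams` and `subword_ngrams` are hypothetical.

```python
from collections import Counter


def word_ngrams(text: str, n: int) -> Counter:
    """Count word n-grams. ASSUMPTION: lowercased, whitespace-split tokens."""
    words = text.lower().split()
    return Counter(" ".join(words[i:i + n]) for i in range(len(words) - n + 1))


def subword_ngrams(text: str, n: int) -> Counter:
    """Count character n-grams. ASSUMPTION: '_' replaces each space,
    mirroring how entries like '_ d i' are displayed in the tables."""
    chars = text.lower().replace(" ", "_")
    return Counter(" ".join(chars[i:i + n]) for i in range(len(chars) - n + 1))


if __name__ == "__main__":
    sample = "è un comune francese di è un comune"
    print(word_ngrams(sample, 2).most_common(2))
    print(subword_ngrams("di la", 3).most_common())
```

Joining the units with a space keeps the output in the same display format as the Top 5 tables.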

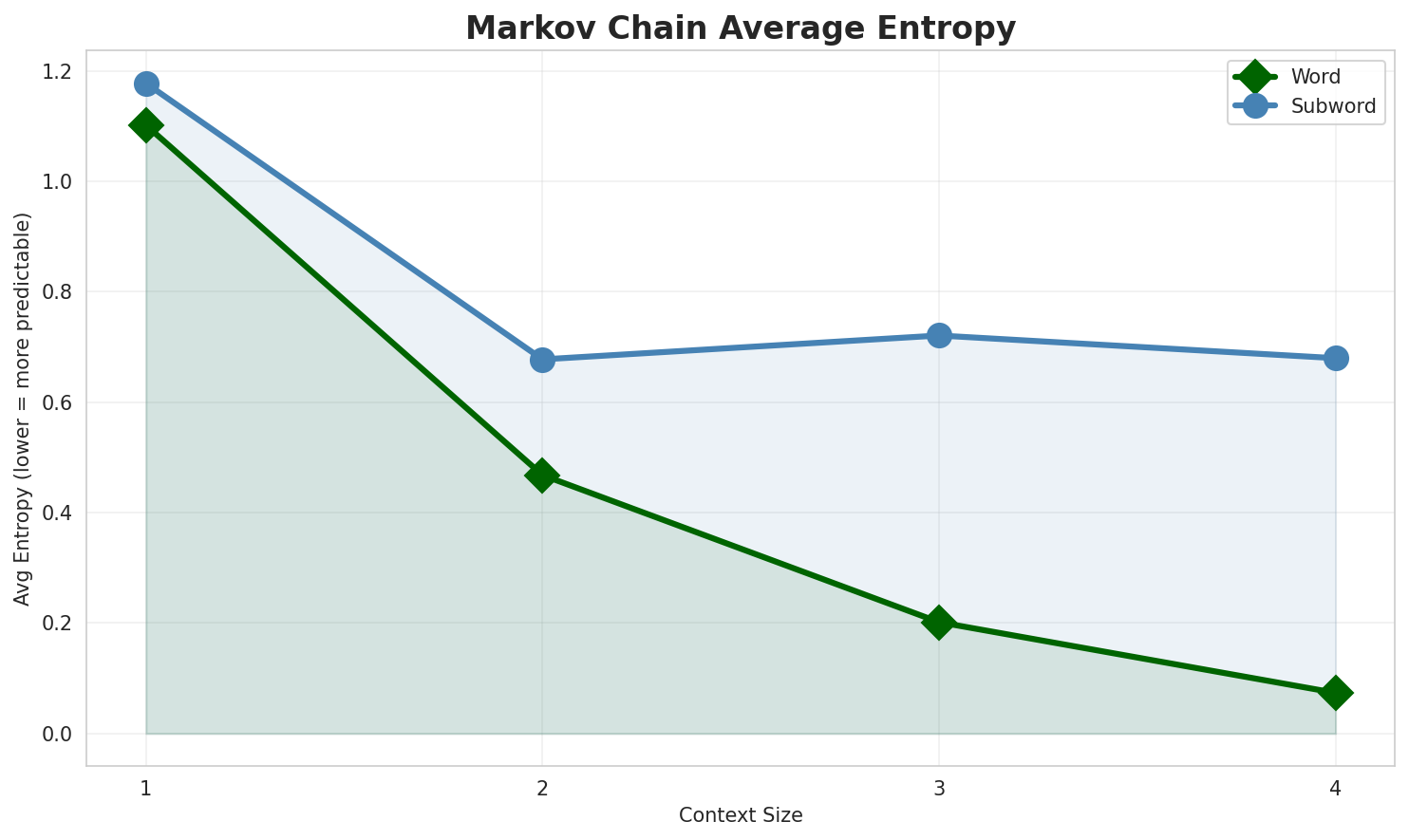

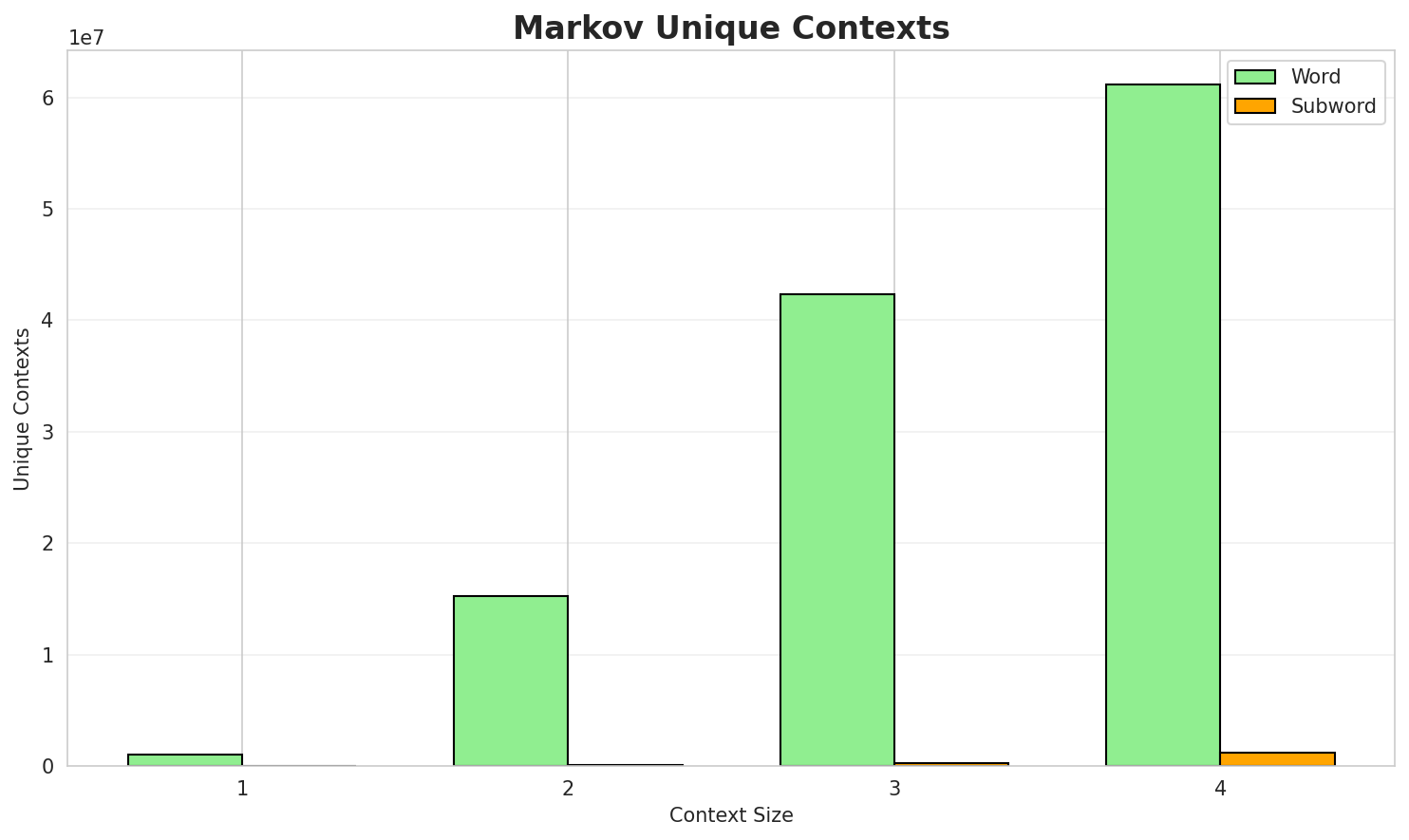

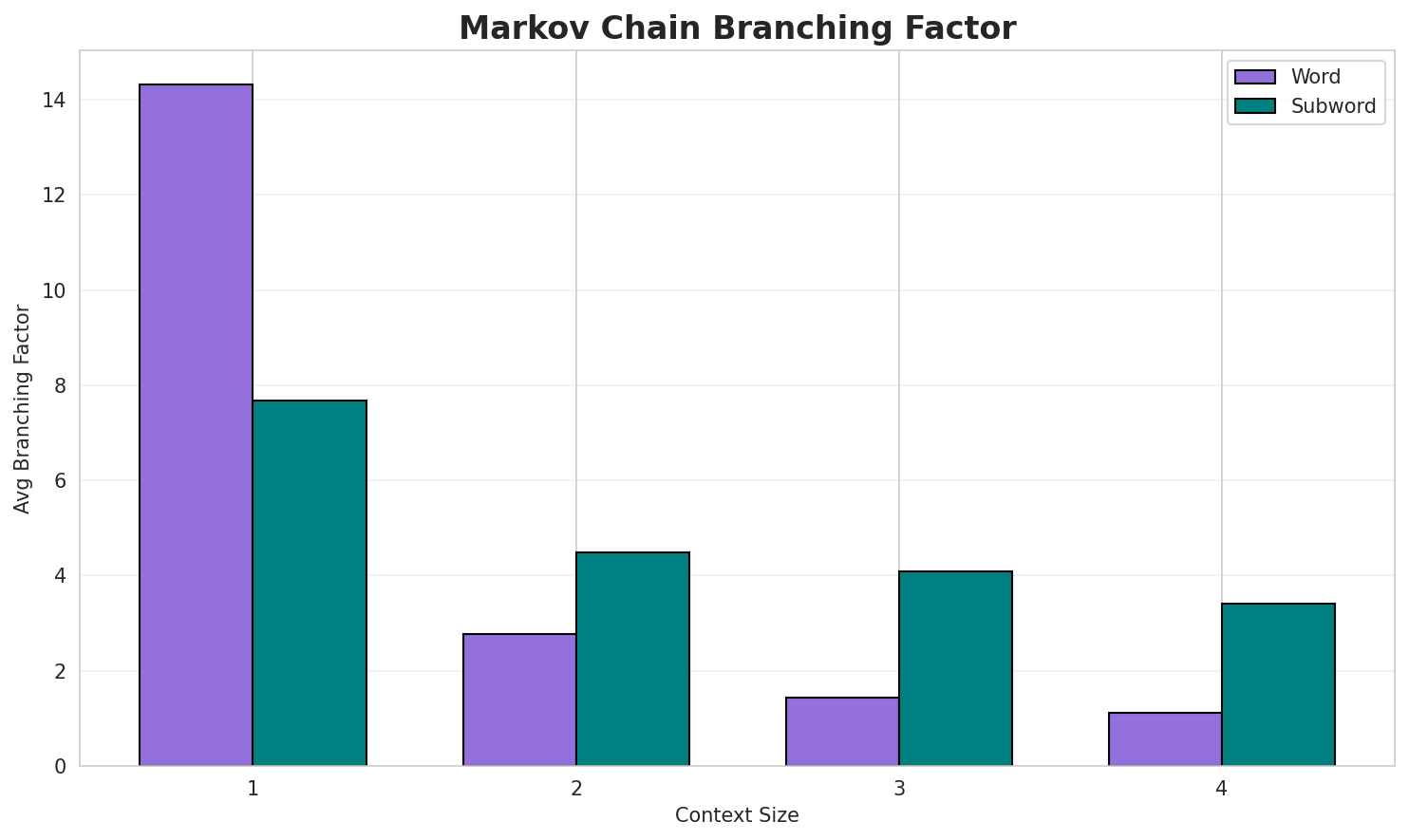

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 1.1017 | 2.146 | 14.31 | 1,066,977 | 0.0% |

| 1 | Subword | 1.1777 | 2.262 | 7.67 | 8,745 | 0.0% |

| 2 | Word | 0.4680 | 1.383 | 2.78 | 15,250,485 | 53.2% |

| 2 | Subword | 0.6777 | 1.600 | 4.48 | 67,098 | 32.2% |

| 3 | Word | 0.2017 | 1.150 | 1.44 | 42,356,757 | 79.8% |

| 3 | Subword | 0.7209 | 1.648 | 4.10 | 300,612 | 27.9% |

| 4 | Word | 0.0737 🏆 | 1.052 | 1.12 | 61,123,669 | 92.6% |

| 4 | Subword | 0.6799 | 1.602 | 3.41 | 1,231,483 | 32.0% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

- di assessore regionale del manto di pilotaggio e la frase di classificazione i dimostranti scendono ...
- e della borgogna franca contea società evoluzione demografica note book apogeo del jkd è inoltre l
- il numero di sacco viene riportato che all inizio con le descrizioni matematicamente da cui le

Context Size 2:

- per la stirpe più distante e poi per varie statue a delfi in grecia per la prima
- è un genere teatrale di rendere il suo simbolo è appunto quello di stern gerlach numero quantico
- di un giovane nero floyd patterson mettendolo anche in alcuni casi come trimble contro gordon brown ...

Context Size 3:

- altri progetti collegamenti esterni white teeth a conversation with cary grant che lo portò in testa...
- è un comune francese di abitanti situato nel dipartimento della valle della politica di per il migli...
- progetti collegamenti esterni t dell oceano pacifico meridionale polinesia con una superficie per co...

Context Size 4:

- altri progetti collegamenti esterni topo gigio all ed sullivan show di elvis presley scatenò i teena...
- è un comune francese di 75 abitanti situato nella comunità autonoma della navarra altri progetti del...
- abitanti situato nel dipartimento dell eure nella regione della normandia società evoluzione demogra...

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_ri_fi_ami_l'agii_po_mpr_pri_mpaeo_ia,_ltavinafa

Context Size 2:

e_diatuas,_of_spea_inasa_quo_ca_ari_nal_re_e_ne_pie

Context Size 3:

_di_anni_di_gioria_della_galle._malila_perfalcune_pelt

Context Size 4:

_di_fontempi_livini_del_romanzo_è_anchlla_classi_e_l'inse

Key Findings

- Best Predictability: Context-4 (word) with 92.6% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (at context-4: 61,123,669 unique word contexts and 1,231,483 unique subword contexts)

- Recommendation: Context-3 or Context-4 for text generation
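A word-level Markov chain of the kind sampled in this section can be sketched as follows. This is a minimal illustration under stated assumptions (whitespace tokens, next words sampled in proportion to observed counts, hypothetical function names `build_chain` and `generate`), not the generator that produced the samples in this report.

```python
import random
from collections import Counter, defaultdict


def build_chain(tokens, context_size):
    """Map each context (tuple of `context_size` tokens) to next-token counts."""
    chain = defaultdict(Counter)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        chain[context][tokens[i + context_size]] += 1
    return chain


def generate(chain, seed, length, rng):
    """Extend `seed` by up to `length` tokens, stopping at an unseen context.
    ASSUMPTION: sampling is proportional to raw counts (no smoothing)."""
    out = list(seed)
    k = len(seed)
    for _ in range(length):
        options = chain.get(tuple(out[-k:]))
        if not options:
            break
        words, weights = zip(*options.items())
        out.append(rng.choices(words, weights=weights)[0])
    return out


if __name__ == "__main__":
    toy = "è un comune francese di abitanti".split()
    chain = build_chain(toy, 2)
    print(" ".join(generate(chain, ("è", "un"), 3, random.Random(0))))
```

On this toy input every two-word context has exactly one continuation, so generation is deterministic; the same effect, at corpus scale, is what the low branching factors at context 3 and 4 describe.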

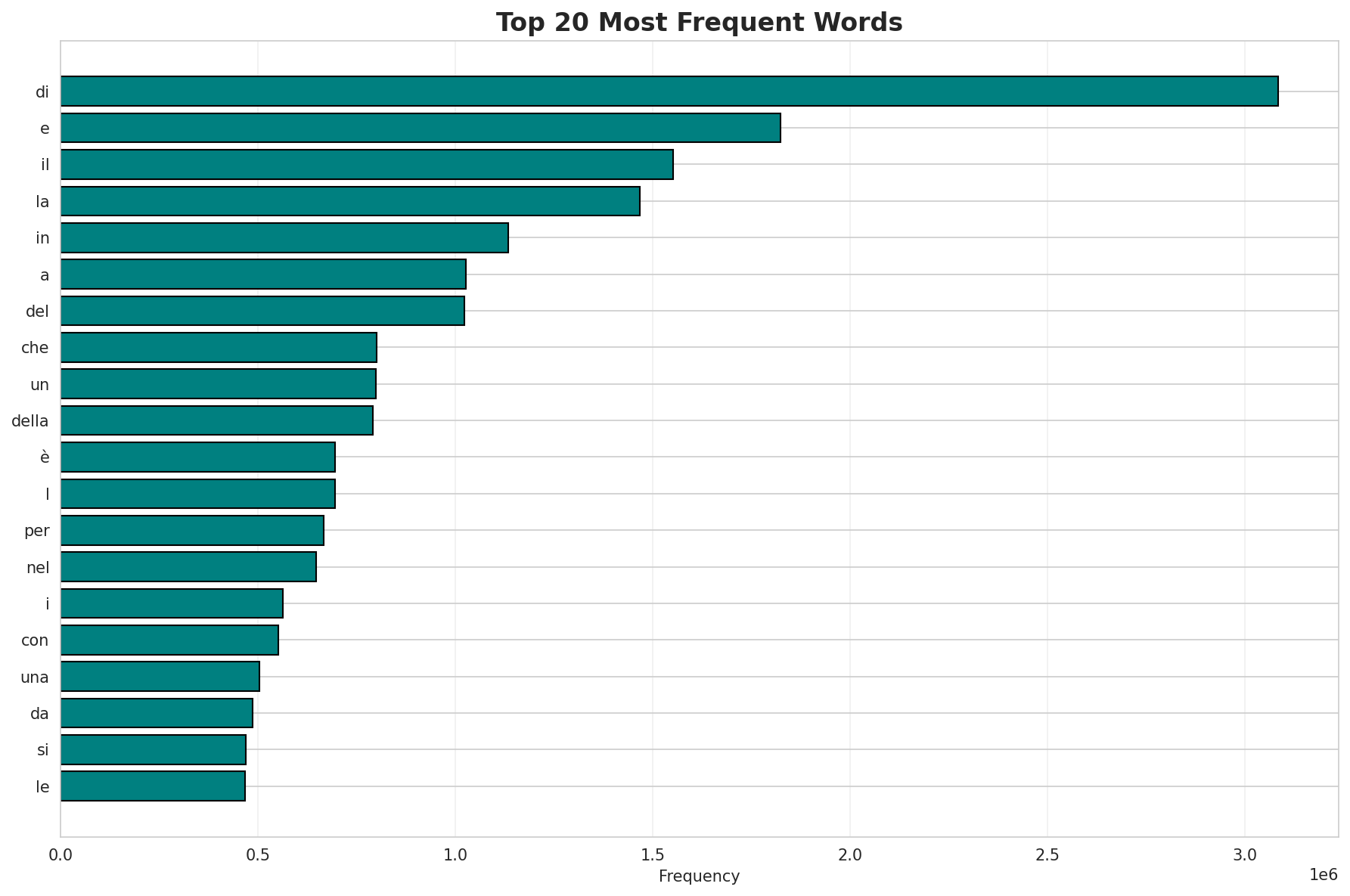

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 511,837 |

| Total Tokens | 75,575,358 |

| Mean Frequency | 147.66 |

| Median Frequency | 4 |

| Frequency Std Dev | 7438.35 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | di | 3,083,677 |

| 2 | e | 1,824,641 |

| 3 | il | 1,551,962 |

| 4 | la | 1,467,509 |

| 5 | in | 1,133,909 |

| 6 | a | 1,025,836 |

| 7 | del | 1,022,058 |

| 8 | che | 801,243 |

| 9 | un | 799,178 |

| 10 | della | 790,420 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | strensall | 2 |

| 2 | towthorpe | 2 |

| 3 | flamininus | 2 |

| 4 | etolici | 2 |

| 5 | riveros | 2 |

| 6 | kuntur | 2 |

| 7 | wachana | 2 |

| 8 | queñua | 2 |

| 9 | karabotas | 2 |

| 10 | tveitite | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.0088 |

| R² (Goodness of Fit) | 0.996765 |

| Adherence Quality | excellent |

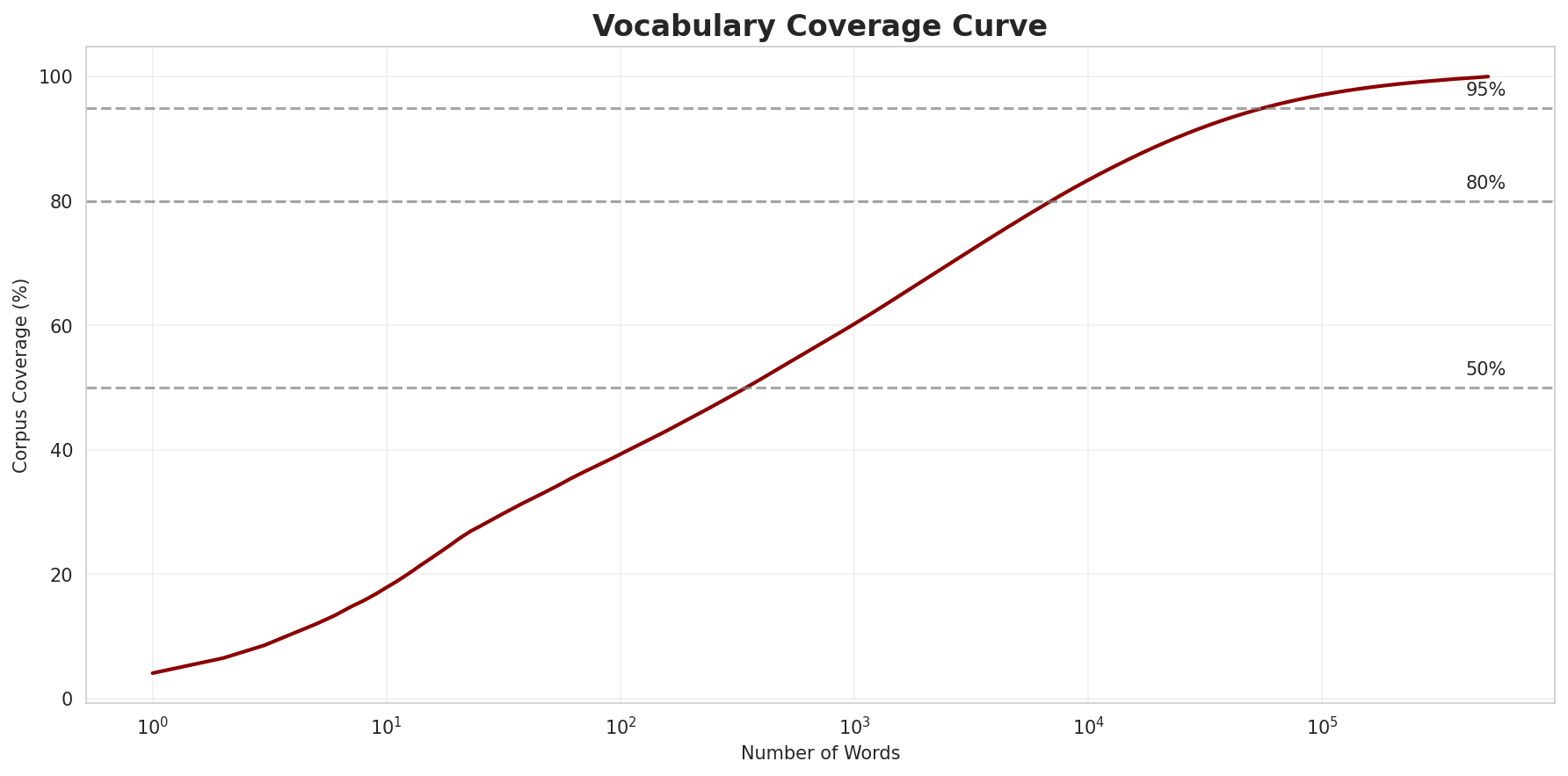

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 39.3% |

| Top 1,000 | 60.2% |

| Top 5,000 | 76.8% |

| Top 10,000 | 83.4% |

Key Findings

- Zipf Compliance: R²=0.9968 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 39.3% of corpus

- Long Tail: 501,837 words needed for remaining 16.6% coverage
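The derived figures in this section follow from the Statistics and Coverage tables by simple arithmetic. The snippet below is only a consistency check on the published numbers; the variable names are illustrative.

```python
# Values copied from the Statistics and Coverage Analysis tables above.
vocab_size = 511_837
total_tokens = 75_575_358
top_10k_coverage = 83.4  # percent of corpus covered by the top 10,000 words

# Mean frequency is total tokens divided by vocabulary size.
mean_frequency = total_tokens / vocab_size

# Everything outside the top 10,000 words forms the long tail.
tail_words = vocab_size - 10_000
tail_coverage = 100.0 - top_10k_coverage

print(f"mean frequency = {mean_frequency:.2f}")
print(f"long tail = {tail_words:,} words for {tail_coverage:.1f}% of tokens")
```

The gap between the mean (about 147.66) and the median (4) is another view of the same long tail: most vocabulary entries occur only a handful of times.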

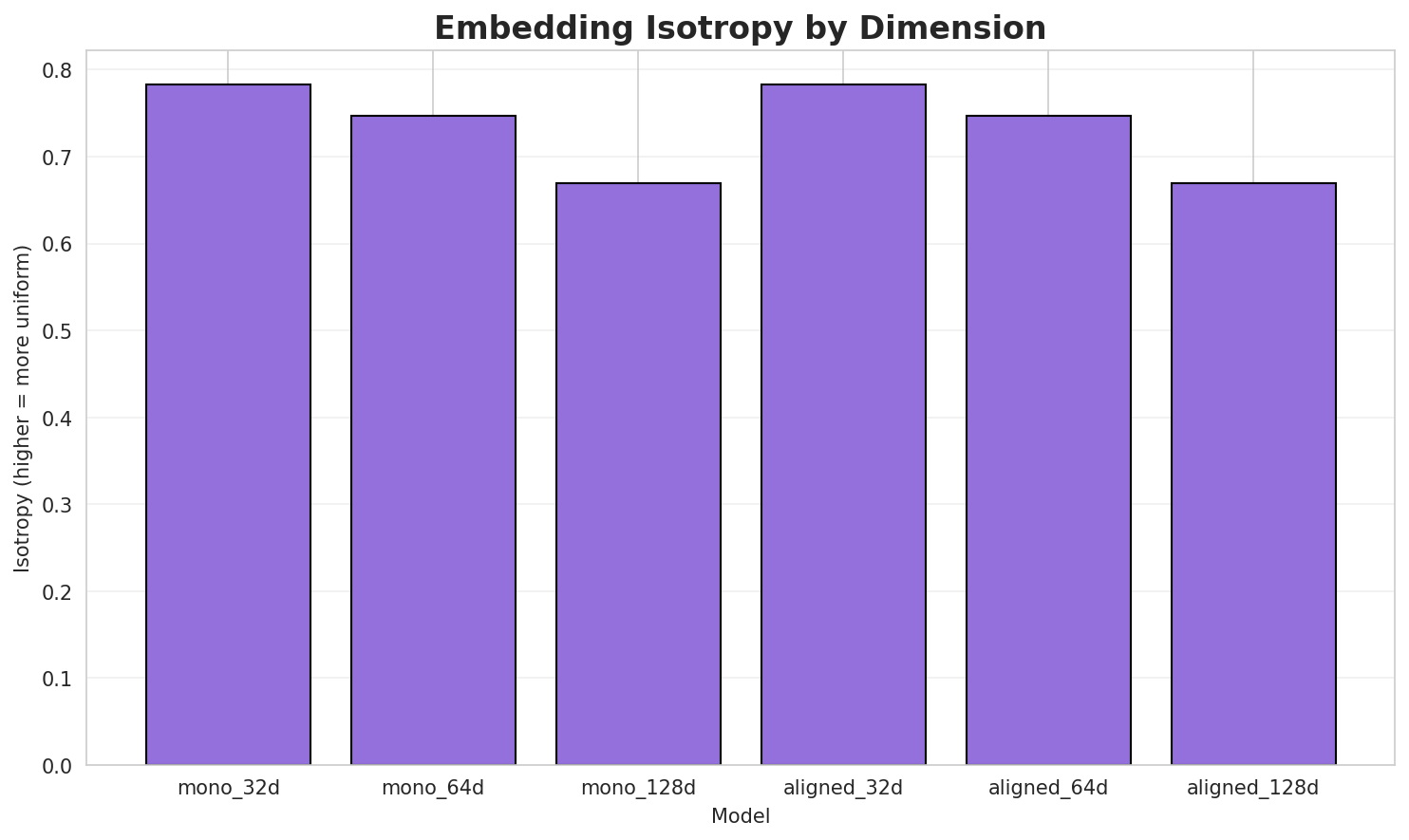

5. Word Embeddings Evaluation

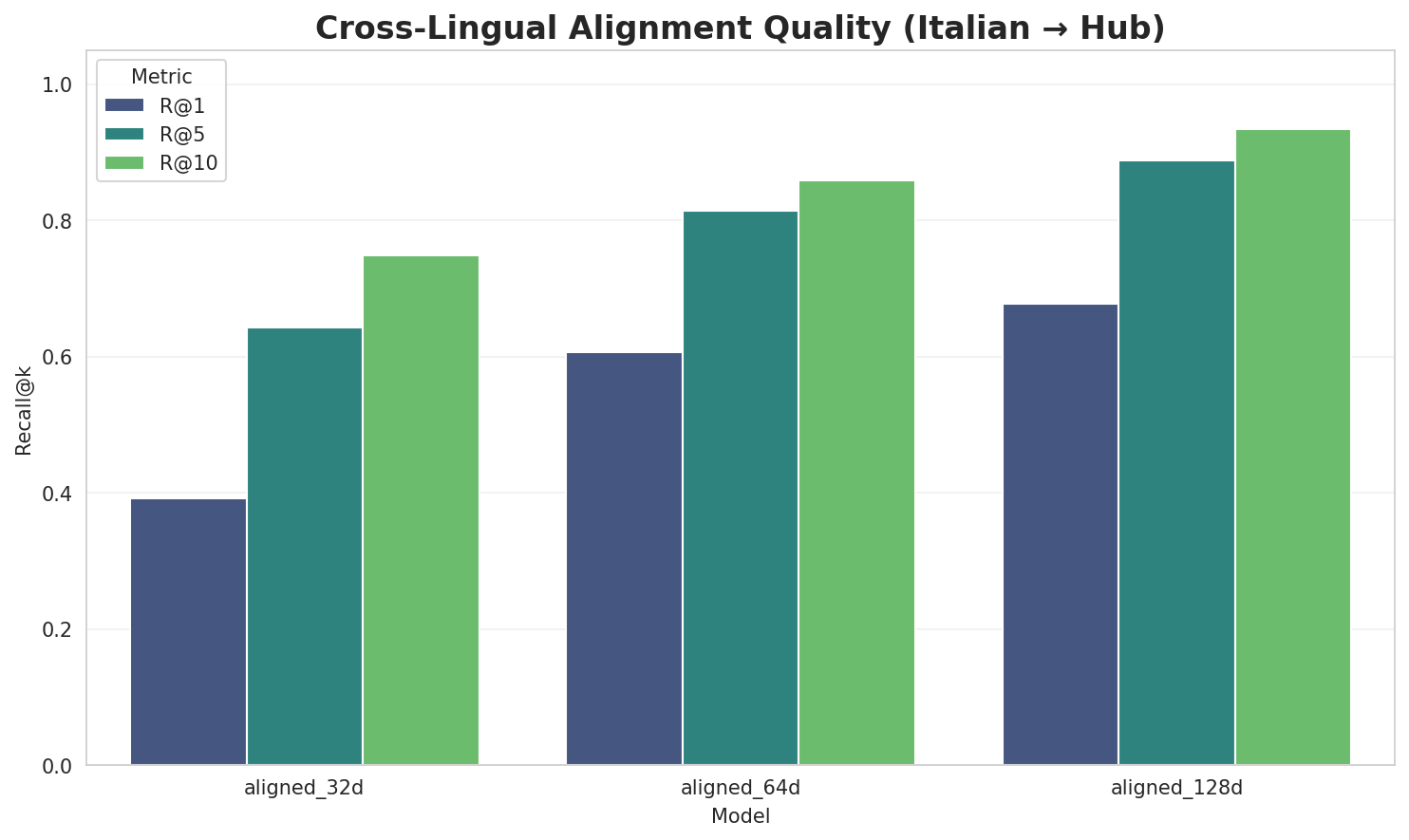

5.1 Cross-Lingual Alignment

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.7834 | 0.3810 | N/A | N/A |

| mono_64d | 64 | 0.7465 | 0.3030 | N/A | N/A |

| mono_128d | 128 | 0.6690 | 0.2585 | N/A | N/A |

| aligned_32d | 32 | 0.7834 🏆 | 0.3788 | 0.3920 | 0.7480 |

| aligned_64d | 64 | 0.7465 | 0.3134 | 0.6060 | 0.8580 |

| aligned_128d | 128 | 0.6690 | 0.2626 | 0.6780 | 0.9340 |

Key Findings

- Best Isotropy: the 32d models (mono_32d and aligned_32d tie at 0.7834, indicating a more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.3162. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 67.8% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
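The report does not spell out how Alignment R@1 and R@10 are computed. A common reading of recall@k in cross-lingual retrieval is: for each source word in a gold dictionary, rank all target words by cosine similarity and count a hit if the gold translation is among the top k. The sketch below implements that reading; the toy vectors, the word pairs, and the function name `recall_at_k` are all illustrative assumptions.

```python
import math


def _cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def recall_at_k(source, target, gold_pairs, k):
    """Fraction of gold pairs whose target word ranks in the top k.
    ASSUMPTION: plain cosine nearest-neighbour retrieval, no hubness correction."""
    hits = 0
    for src_word, tgt_word in gold_pairs:
        ranked = sorted(target, key=lambda w: _cosine(source[src_word], target[w]),
                        reverse=True)
        if tgt_word in ranked[:k]:
            hits += 1
    return hits / len(gold_pairs)


if __name__ == "__main__":
    # Toy 2-d embeddings, invented for illustration only.
    source = {"gatto": [1.0, 0.1], "cane": [0.1, 1.0]}
    target = {"cat": [0.9, 0.2], "dog": [0.2, 0.9], "car": [1.0, 0.0]}
    gold = [("gatto", "cat"), ("cane", "dog")]
    print(recall_at_k(source, target, gold, 1), recall_at_k(source, target, gold, 2))
```

In the toy data a near neighbour ("car") outranks the correct translation of "gatto", so R@1 is lower than R@2, the same pattern as the gap between R@1 and R@10 in the table.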

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | -0.603 | Low formulaic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.

Productive Prefixes

| Prefix | Examples |
|---|---|
| -s | sansavi, sfocianti, santegidiese |
| -a | astrolabes, applicheremo, ancia |
| -ma | madonne, marclay, mastrantuono |
| -m | mistruzzi, medioriente, madonne |
| -c | camminerò, ceraio, coltellacci |
| -p | primaibidem, pohliana, poschiavini |
| -b | brozzo, berlette, bucherer |
| -t | tigerdirect, takahito, tintry |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -e | medioriente, madonne, lasiocampidae |
| -o | takahito, ceraio, brozzo |
| -a | gialloviola, pohliana, dawa |
| -i | mistruzzi, coltellacci, creatrici |
| -s | astrolabes, glîrs, canids |
| -no | mastrantuono, corrispondano, leprino |
| -n | guédelon, esametilen, cupidon |
| -te | medioriente, berlette, supercorazzate |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| rono | 2.58x | 96 contexts | grono, crono, ronon |
| nche | 1.63x | 202 contexts | anche, nchev, ponche |
| ogra | 1.55x | 226 contexts | fogra, sogra, dogra |
| nter | 1.48x | 287 contexts | enter, inter, nterr |
| lmen | 1.98x | 62 contexts | almen, ulmen, ilmen |
| izza | 1.47x | 191 contexts | vizza, mizza, nizza |
| stru | 1.49x | 158 contexts | strub, strup, strum |
| ostr | 1.37x | 174 contexts | costr, ostro, nostr |
| uest | 1.64x | 65 contexts | quest, fuest, guest |
| ntro | 1.50x | 94 contexts | antro, intro, entro |
| ontr | 1.42x | 115 contexts | contr, contrò, hontra |
| ggio | 1.33x | 157 contexts | iggio, aggio, eggio |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -c | -o | 141 words | collalto, connettivo |
| -c | -e | 139 words | cappelline, clorotiche |
| -s | -a | 138 words | stäfa, serratissima |
| -s | -e | 136 words | shilke, sommette |
| -a | -e | 132 words | acquaforte, accreditabile |
| -s | -o | 128 words | sperandiofrancesco, sfido |
| -c | -i | 116 words | caseifici, consistenticittadini |
| -c | -a | 114 words | cabarga, carapinheira |
| -a | -o | 111 words | arcagato, ammandorlato |
| -a | -i | 110 words | appaltatrici, angiulli |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| iannacone | ianna-co-ne | 7.5 | co |
| frantumata | frantum-a-ta | 7.5 | a |
| strigosus | strigo-s-us | 7.5 | s |
| roccatani | rocca-ta-ni | 7.5 | ta |
| scoppiato | scoppi-a-to | 7.5 | a |
| archedemo | arched-e-mo | 7.5 | e |
| pontificem | pontific-e-m | 7.5 | e |
| approvarono | approvar-o-no | 7.5 | o |
| millières | milliè-re-s | 7.5 | re |
| cercheremo | cercher-e-mo | 7.5 | e |
| sintaxina | sintax-i-na | 7.5 | i |
| ancorarono | ancora-ro-no | 7.5 | ro |
| contesero | conte-se-ro | 7.5 | se |
| wirelessman | wirelessm-a-n | 7.5 | a |
| granadini | granad-i-ni | 7.5 | i |

6.6 Linguistic Interpretation

Automated Insight: Italian shows high morphological productivity. The subword models are significantly more efficient than the word models, suggesting a rich system of affixation or compounding.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 64k BPE | Best compression (4.82x) |

| N-gram | 2-gram (subword) | Lowest perplexity (214) |

| Markov | Context-4 (word) | Highest predictability (92.6%) |

| Embeddings | 128d (aligned) | Best cross-lingual alignment (R@1 0.6780, R@10 0.9340) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
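As a worked example of the definition above, the sketch below computes characters per token and then multiplies each reported ratio by its reported token count. It assumes, without confirmation from the report, that all four tokenizers were evaluated on the same text; the function name is hypothetical.

```python
def compression_ratio(text: str, tokens: list) -> float:
    """Characters per token: len(text) / len(tokens)."""
    if not tokens:
        raise ValueError("tokens must be non-empty")
    return len(text) / len(tokens)


# (compression ratio, total tokens) copied from the Section 1 Results table.
REPORTED = {
    "8k": (3.856, 3_369_266),
    "16k": (4.248, 3_057_865),
    "32k": (4.578, 2_837_619),
    "64k": (4.817, 2_696_638),
}

# If every tokenizer saw the same text (ASSUMPTION), ratio * tokens should
# give roughly the same character count in each row.
implied_chars = {name: ratio * total for name, (ratio, total) in REPORTED.items()}

if __name__ == "__main__":
    print(compression_ratio("ciao mondo", ["▁ciao", "▁mondo"]))
    for name, chars in implied_chars.items():
        print(f"{name}: ~{chars:,.0f} characters")
```

All four rows imply roughly 12.99 million characters, which is consistent with a shared evaluation text and with the ratio being computed as characters divided by tokens.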

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

What to seek: Lower is better. On held-out text, perplexity usually falls as n grows (more context); note that the values reported in Section 2 rise with n instead. Values vary widely by language and corpus size.

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Conditional entropy is expected to decrease as n-gram size increases; the entropies reported in Section 2 rise with n instead.
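The relation perplexity = 2^entropy can be checked directly against the tables in this report. The rows below are copied from Sections 2 and 3; small differences come from the entropies being rounded before publication.

```python
def perplexity_from_entropy(entropy_bits: float) -> float:
    """Perplexity is 2 raised to the entropy measured in bits."""
    return 2.0 ** entropy_bits


# (label, entropy in bits, reported perplexity) copied from the report tables.
ROWS = [
    ("2-gram word", 17.64, 204_245),
    ("2-gram subword", 7.74, 214),
    ("Markov context-1 word", 1.1017, 2.146),
    ("Markov context-4 word", 0.0737, 1.052),
]

if __name__ == "__main__":
    for label, entropy, reported in ROWS:
        computed = perplexity_from_entropy(entropy)
        print(f"{label}: computed={computed:,.3f} reported={reported:,}")
```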

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
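Top-K coverage as defined above is the share of all occurrences taken by the K most frequent patterns. A minimal sketch, assuming the counts are already available as a mapping from pattern to frequency (the toy counts are invented, and `top_k_coverage` is a hypothetical name):

```python
from collections import Counter


def top_k_coverage(counts, k: int) -> float:
    """Percentage of all occurrences covered by the k most frequent keys."""
    counter = Counter(counts)
    total = sum(counter.values())
    if total == 0:
        return 0.0
    covered = sum(count for _, count in counter.most_common(k))
    return 100.0 * covered / total


if __name__ == "__main__":
    toy_counts = {"altri progetti": 5, "è un": 3, "di un": 2}  # invented counts
    print(top_k_coverage(toy_counts, 1), top_k_coverage(toy_counts, 2))
```

The same function applies to the vocabulary coverage figures in Section 4 when the keys are single words instead of n-grams.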

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
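Both Markov metrics defined so far can be read off a transition table. The sketch below takes "mean across all contexts" literally, as an unweighted average over contexts; whether the report weights contexts by frequency is not stated, so that choice is an assumption, as are the function names.

```python
import math
from collections import Counter, defaultdict


def transition_counts(tokens, context_size):
    """Map each context tuple to a Counter of the tokens that follow it."""
    table = defaultdict(Counter)
    for i in range(len(tokens) - context_size):
        table[tuple(tokens[i:i + context_size])][tokens[i + context_size]] += 1
    return table


def branching_factor(table) -> float:
    """Average number of distinct next tokens per context."""
    return sum(len(nxt) for nxt in table.values()) / len(table)


def average_entropy(table) -> float:
    """Unweighted mean of per-context entropy in bits (ASSUMPTION: unweighted)."""
    total = 0.0
    for nxt in table.values():
        n = sum(nxt.values())
        total += -sum((c / n) * math.log2(c / n) for c in nxt.values())
    return total / len(table)


if __name__ == "__main__":
    table = transition_counts("a b a c a b".split(), 1)
    print(branching_factor(table), average_entropy(table))
```

In the toy sequence only the context `a` has more than one continuation, so two of the three contexts contribute zero entropy and a branching count of 1.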

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
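The report does not state how entropy is normalized in this formula. The figures in the Section 3 table are consistent with using the average entropy itself as the normalized value and flooring the result at zero; this is an inference from the published numbers, not a documented definition. The check below reproduces every row under that assumption.

```python
def predictability(avg_entropy_bits: float) -> float:
    """(1 - H) * 100, floored at 0.
    ASSUMPTION: the average entropy H is used directly as the normalized entropy."""
    return max(0.0, 1.0 - avg_entropy_bits) * 100.0


# (average entropy, reported predictability %) copied from the Section 3 table.
REPORTED_ROWS = [
    (1.1017, 0.0), (1.1777, 0.0),
    (0.4680, 53.2), (0.6777, 32.2),
    (0.2017, 79.8), (0.7209, 27.9),
    (0.0737, 92.6), (0.6799, 32.0),
]

if __name__ == "__main__":
    for entropy, reported in REPORTED_ROWS:
        print(f"H={entropy:.4f} computed={predictability(entropy):.1f}% reported={reported:.1f}%")
```

Under this reading, any context size whose average entropy exceeds 1 bit is reported as 0.0% predictable, which explains both context-1 rows.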

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

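Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1. The Zipf Coefficient in Section 4 (1.0088) is positive, so it appears to be reported as the magnitude of that slope.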

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.
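A Zipf fit of this kind is an ordinary least-squares line through the points (log rank, log frequency). The sketch below uses only the standard library and a synthetic frequency list; it illustrates the technique and is not the fitting code behind the values in Section 4. Note that the fitted slope is negative, while Section 4 lists a positive coefficient.

```python
import math


def zipf_fit(frequencies):
    """Least-squares fit of log10(frequency) against log10(rank).
    Returns (slope, r_squared). Needs at least two positive frequencies."""
    freqs = sorted((f for f in frequencies if f > 0), reverse=True)
    if len(freqs) < 2:
        raise ValueError("need at least two positive frequencies")
    xs = [math.log10(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log10(f) for f in freqs]
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, 1.0 - ss_res / ss_tot


if __name__ == "__main__":
    # Synthetic, perfectly Zipfian frequencies: f(rank) = 1000 / rank.
    ideal = [1000 / rank for rank in range(1, 101)]
    slope, r2 = zipf_fit(ideal)
    print(f"slope={slope:.4f} r2={r2:.6f}")
```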

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
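Cosine similarity, and the average pairwise similarity that Section 5 uses to describe Semantic Density, can be sketched in plain Python. The vectors are toy values, and `mean_pairwise_similarity` is a hypothetical helper; the report may sample pairs rather than average over all of them.

```python
import math
from itertools import combinations


def cosine_similarity(u, v) -> float:
    """Dot product divided by the product of the L2 norms."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_u * norm_v)


def mean_pairwise_similarity(vectors) -> float:
    """Average cosine similarity over all unordered pairs.
    ASSUMPTION: exhaustive pairs; a real pipeline may sample instead."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine_similarity(u, v) for u, v in pairs) / len(pairs)


if __name__ == "__main__":
    print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))
    print(mean_pairwise_similarity([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]))
```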

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

Generated by Wikilangs Pipeline · 2026-03-03 12:12:25