Arabic — Full Ablation Study & Research Report

Detailed evaluation of all model variants trained on Arabic Wikipedia data by Wikilangs.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

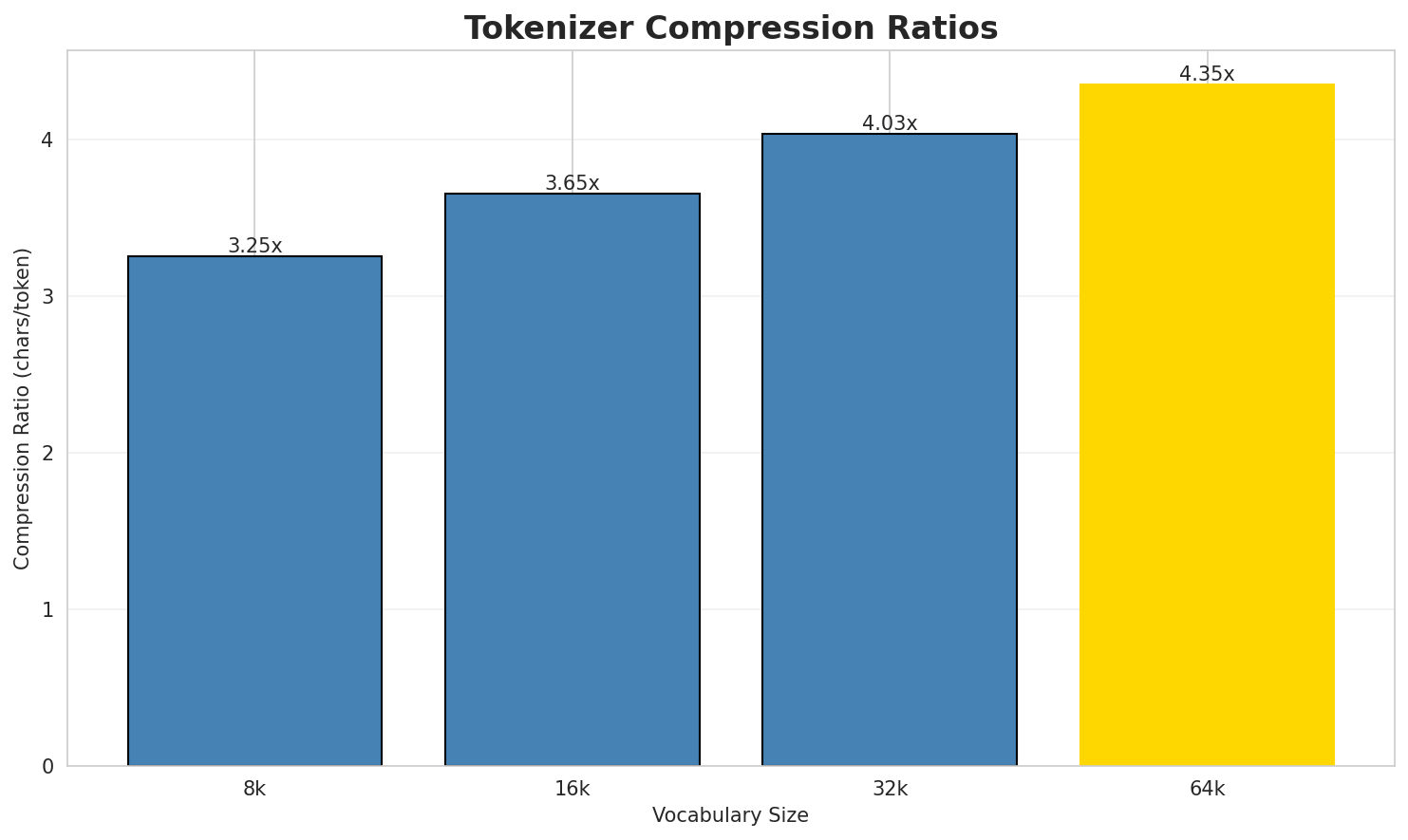

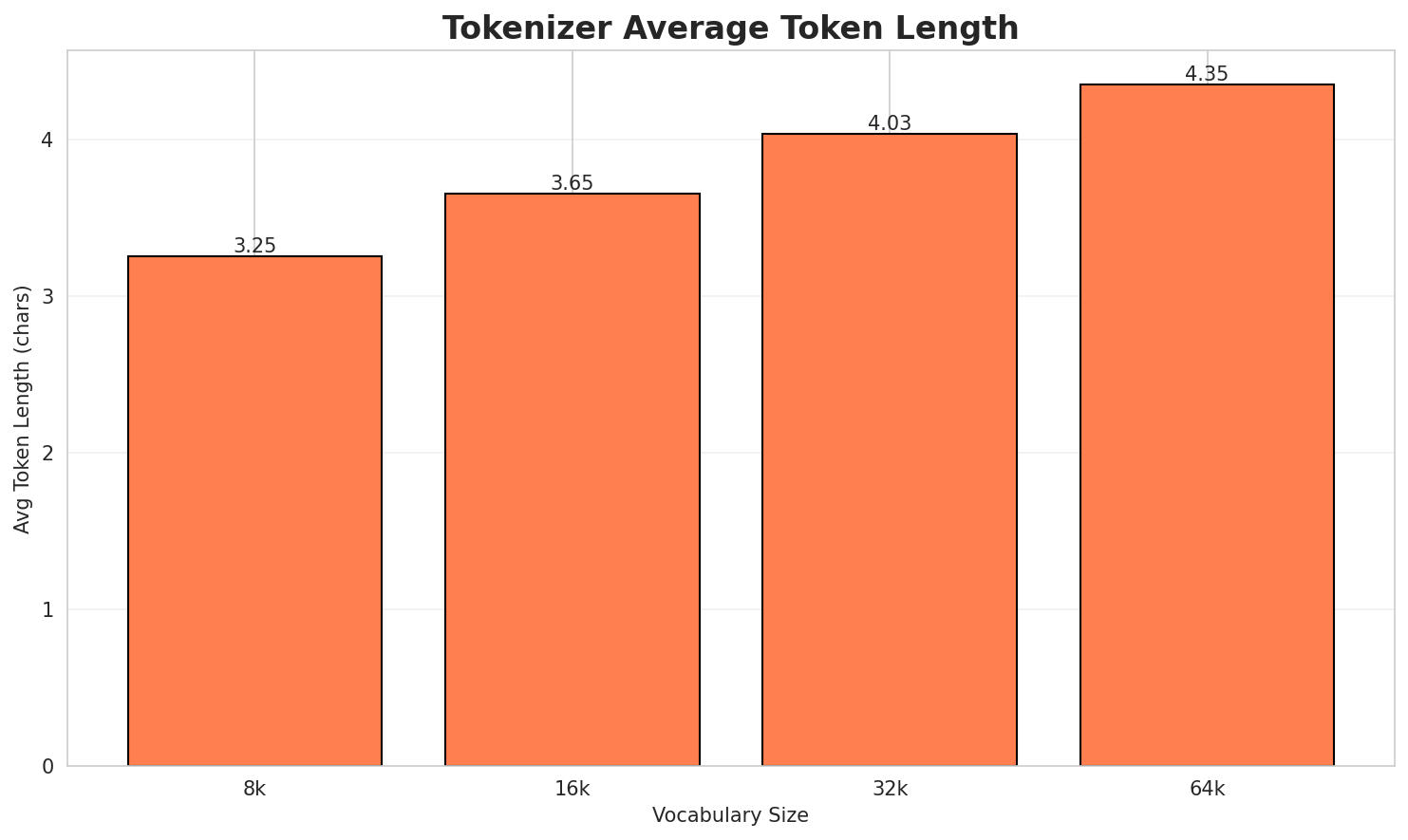

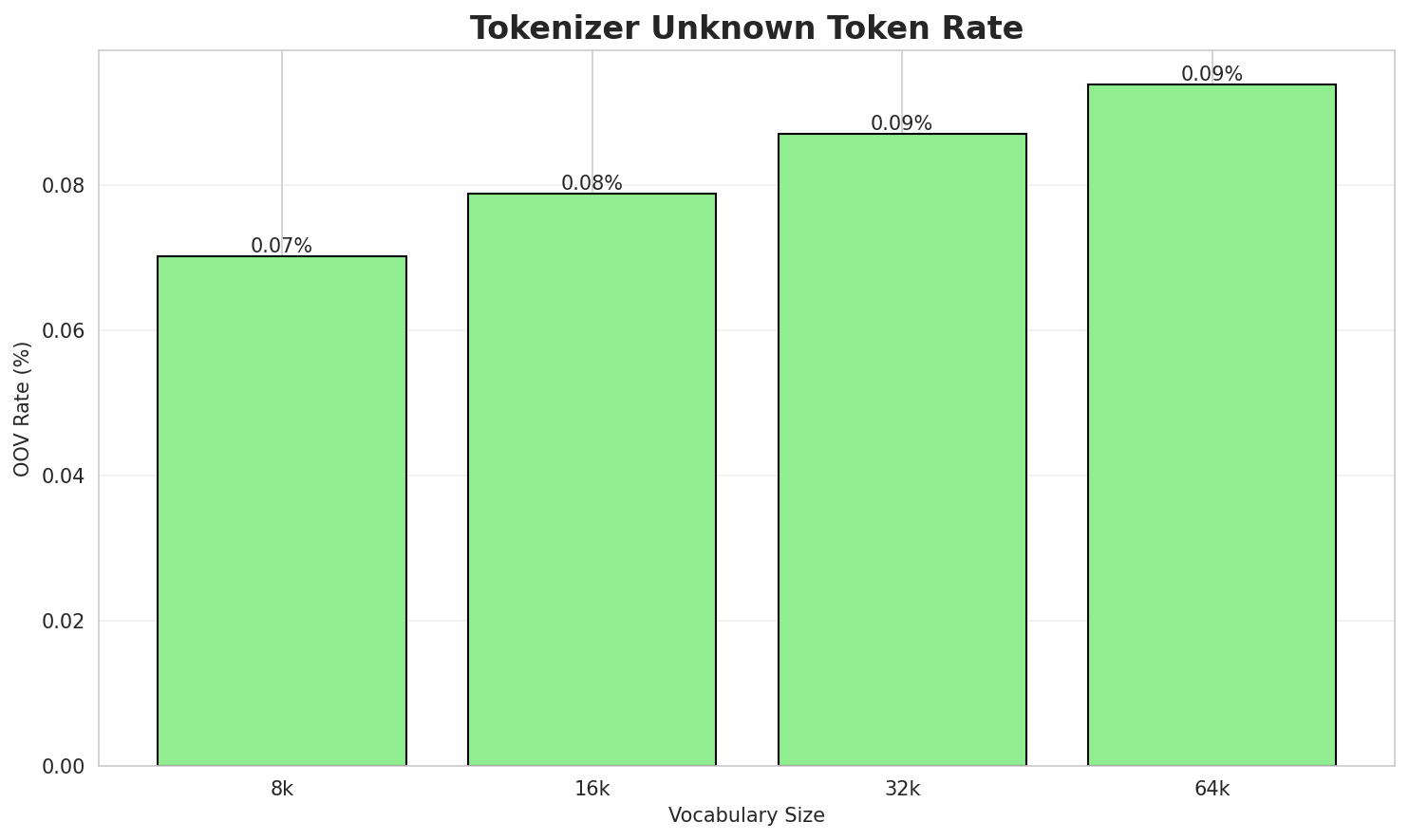

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |
|---|---|---|---|---|
| 8k | 3.251x | 3.25 | 0.0702% | 5,509,050 |
| 16k | 3.654x | 3.65 | 0.0788% | 4,901,830 |
| 32k | 4.033x | 4.03 | 0.0870% | 4,440,712 |
| 64k | 4.347x 🏆 | 4.35 | 0.0938% | 4,120,770 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: استوديوهات أفلام والت ديزني أفلام والت ديزني منتجع والت ديزني العالمي ديزني لاند...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁است ودي وه ات ▁أفلام ▁والت ▁دي ز ني ▁أفلام ... (+22 more) | 32 |
| 16k | ▁است ودي وهات ▁أفلام ▁والت ▁ديزني ▁أفلام ▁والت ▁ديزني ▁منت ... (+10 more) | 20 |
| 32k | ▁استوديوهات ▁أفلام ▁والت ▁ديزني ▁أفلام ▁والت ▁ديزني ▁منتجع ▁والت ▁ديزني ... (+7 more) | 17 |
| 64k | ▁استوديوهات ▁أفلام ▁والت ▁ديزني ▁أفلام ▁والت ▁ديزني ▁منتجع ▁والت ▁ديزني ... (+7 more) | 17 |

Sample 2: باسكال قد تعني: الباسكال، وحدة قياس الضغط لغة باسكال، لغة برمجة الفيلسوف باسكال،...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁با سك ال ▁قد ▁تعني : ▁البا سك ال ، ... (+29 more) | 39 |
| 16k | ▁باسكال ▁قد ▁تعني : ▁الباسك ال ، ▁وحدة ▁قياس ▁الضغط ... (+18 more) | 28 |
| 32k | ▁باسكال ▁قد ▁تعني : ▁الباسك ال ، ▁وحدة ▁قياس ▁الضغط ... (+15 more) | 25 |
| 64k | ▁باسكال ▁قد ▁تعني : ▁الباسك ال ، ▁وحدة ▁قياس ▁الضغط ... (+15 more) | 25 |

Sample 3: جمهورية الكونغو الديمقراطية، زائير سابقًا، عاصمتها كينشاسا. جمهورية الكونغو، عاص...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁جمهورية ▁الكون غو ▁الديمقراطية ، ▁ز ائ ير ▁سابق ًا ... (+21 more) | 31 |
| 16k | ▁جمهورية ▁الكونغو ▁الديمقراطية ، ▁ز ائ ير ▁سابقًا ، ▁عاصمتها ... (+16 more) | 26 |
| 32k | ▁جمهورية ▁الكونغو ▁الديمقراطية ، ▁زائ ير ▁سابقًا ، ▁عاصمتها ▁كينشاسا ... (+12 more) | 22 |
| 64k | ▁جمهورية ▁الكونغو ▁الديمقراطية ، ▁زائير ▁سابقًا ، ▁عاصمتها ▁كينشاسا . ... (+10 more) | 20 |

Key Findings

- Best Compression: 64k achieves 4.347x compression

- Lowest UNK Rate: 8k with 0.0702% unknown tokens

- Trade-off: Larger vocabularies improve compression (3.251x at 8k to 4.347x at 64k) but increase model size and slightly raise the UNK rate (0.0702% to 0.0938%)

- Recommendation: 32k vocabulary provides optimal balance for production use

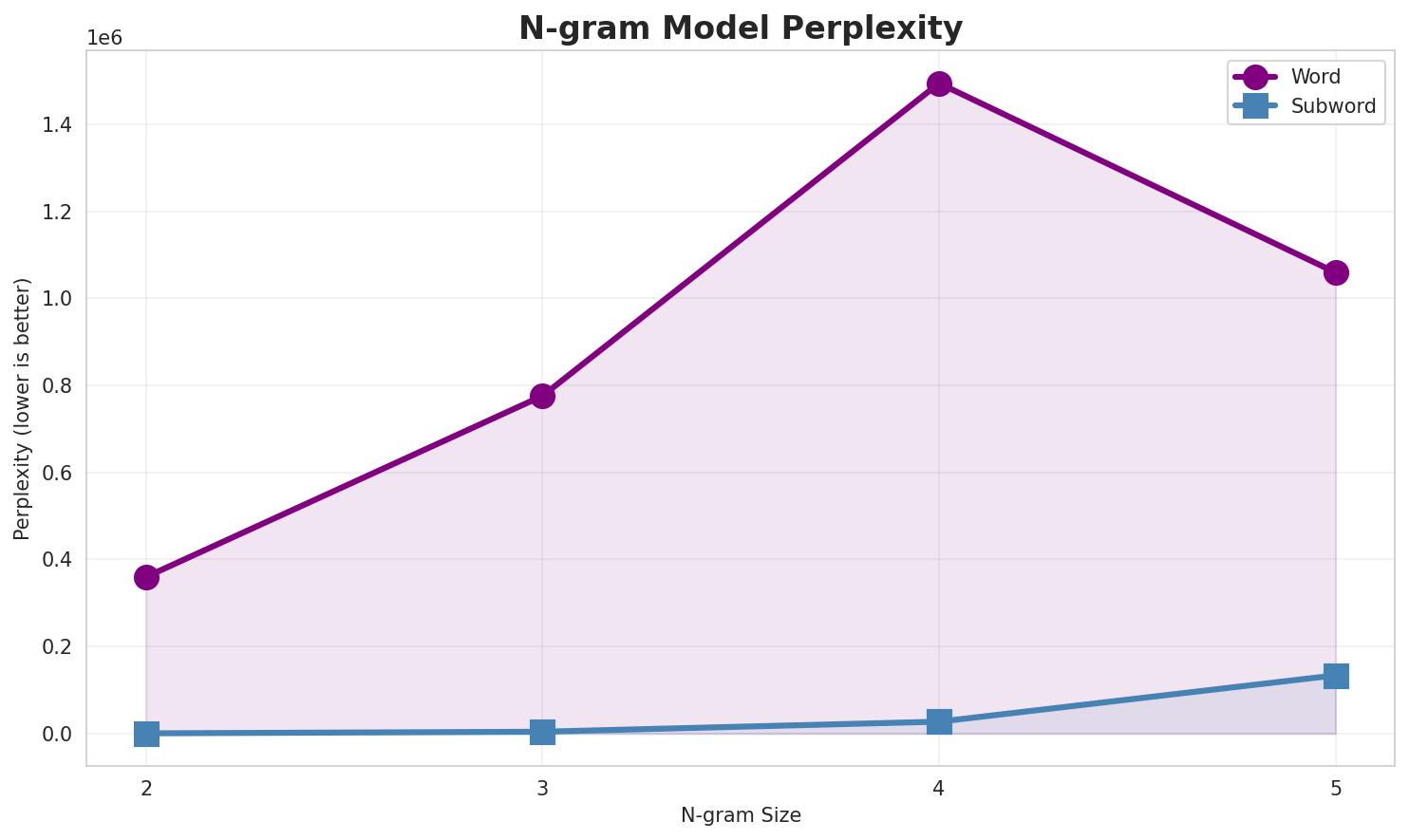

2. N-gram Model Evaluation

Results

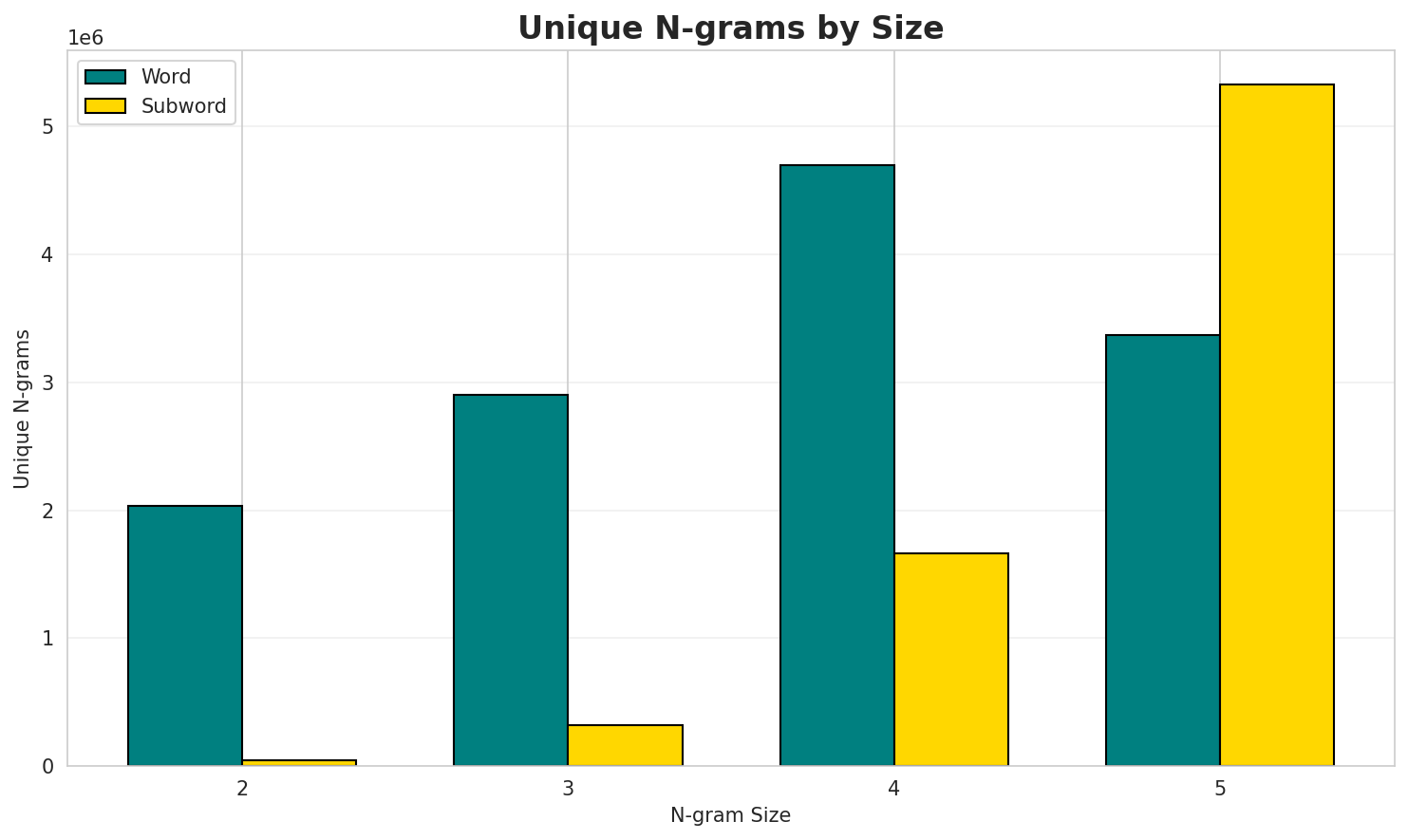

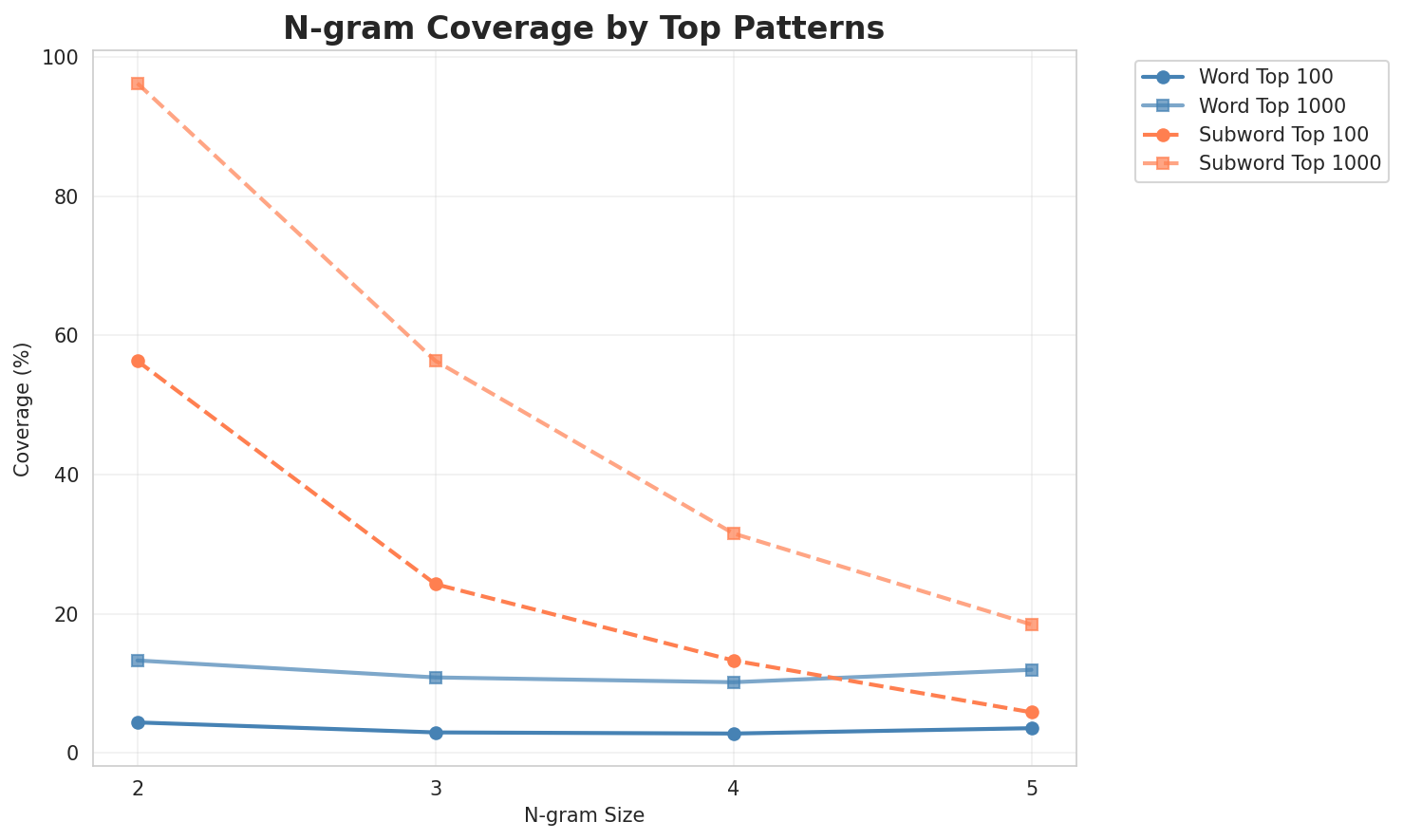

| N-gram | Variant | Perplexity | Entropy (bits) | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |
|---|---|---|---|---|---|---|
| 2-gram | Word | 359,826 | 18.46 | 2,030,200 | 4.4% | 13.3% |
| 2-gram | Subword | 426 🏆 | 8.73 | 44,225 | 56.3% | 96.2% |
| 3-gram | Word | 775,988 | 19.57 | 2,900,317 | 3.0% | 10.9% |
| 3-gram | Subword | 4,163 | 12.02 | 321,654 | 24.3% | 56.3% |
| 4-gram | Word | 1,494,234 | 20.51 | 4,693,107 | 2.8% | 10.2% |
| 4-gram | Subword | 27,277 | 14.74 | 1,666,030 | 13.3% | 31.5% |
| 5-gram | Word | 1,059,510 | 20.01 | 3,368,028 | 3.6% | 11.9% |
| 5-gram | Subword | 133,736 | 17.03 | 5,324,551 | 5.8% | 18.5% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | في عام | 137,432 |
| 2 | في القرن | 92,611 |
| 3 | كرة قدم | 88,053 |
| 4 | العديد من | 65,695 |
| 5 | الولايات المتحدة | 63,417 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | في القرن 20 | 27,502 |
| 2 | في الولايات المتحدة | 25,188 |
| 3 | على الرغم من | 25,111 |
| 4 | في القرن 21 | 20,515 |
| 5 | بما في ذلك | 18,931 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | كرة قدم مغتربون في | 15,717 |
| 2 | تحت سن الثامنة عشر | 13,585 |
| 3 | على الرغم من أن | 8,756 |
| 4 | في الألعاب الأولمبية الصيفية | 5,980 |
| 5 | عام بلغ عدد سكان | 5,886 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | تعداد عام بلغ عدد سكان | 5,588 |
| 2 | بحسب تعداد عام وبلغ عدد | 5,569 |
| 3 | تعداد عام وبلغ عدد الأسر | 5,569 |
| 4 | نسمة بحسب تعداد عام وبلغ | 5,566 |
| 5 | في الفئة العمرية ما بين | 5,561 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ا ل | 27,516,669 |
| 2 | _ ا | 23,616,110 |
| 3 | ة _ | 13,152,069 |
| 4 | ن _ | 9,255,735 |
| 5 | ي _ | 9,009,959 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ ا ل | 22,248,047 |
| 2 | ا ل م | 4,149,844 |
| 3 | ي ة _ | 4,126,642 |
| 4 | _ ف ي | 4,065,816 |
| 5 | ف ي _ | 3,976,227 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ ف ي _ | 3,688,677 |
| 2 | ة _ ا ل | 3,625,657 |
| 3 | _ ا ل م | 3,573,633 |
| 4 | ن _ ا ل | 2,468,103 |
| 5 | _ م ن _ | 2,362,149 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ف ي _ ا ل | 1,266,206 |
| 2 | _ ف ي _ ا | 1,245,053 |
| 3 | ا ت _ ا ل | 1,085,180 |
| 4 | _ ع ل ى _ | 1,078,435 |
| 5 | ي ة _ ا ل | 1,036,752 |

Key Findings

- Best Perplexity: 2-gram (subword) with 426

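- Entropy Trend: Entropy rises with n-gram order, tracking the number of unique n-grams (subword: 8.73 bits at 2-gram to 17.03 bits at 5-gram; word: 18.46 bits at 2-gram to 20.51 bits at 4-gram, dipping to 20.01 at 5-gram)

- Coverage: Top-1000 patterns cover 10.2-13.3% of word n-gram occurrences, and from 96.2% (2-gram) down to 18.5% (5-gram) of subword n-gram occurrences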

- Recommendation: 4-gram or 5-gram for best predictive performance

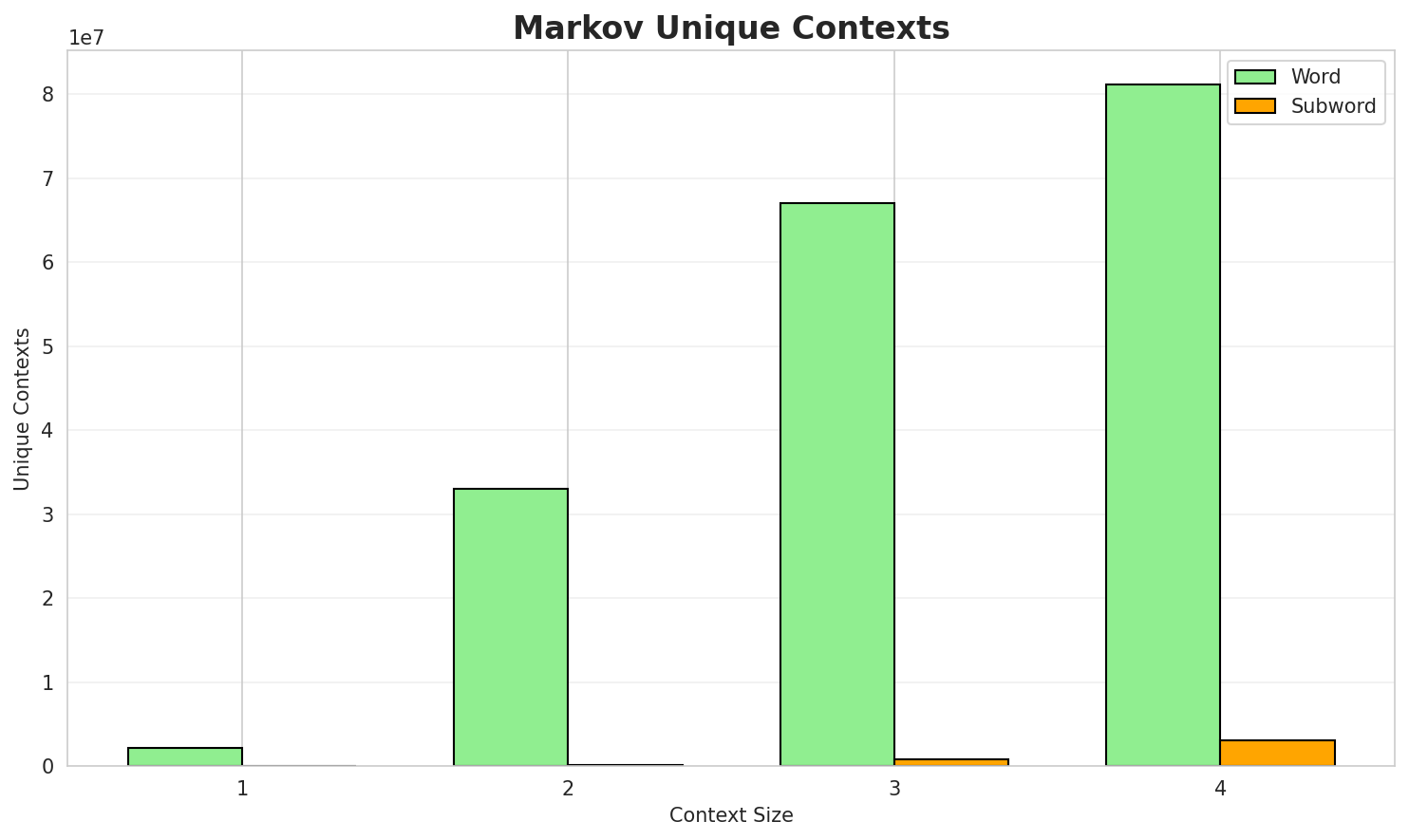

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |
|---|---|---|---|---|---|---|
| 1 | Word | 1.0468 | 2.066 | 15.08 | 2,190,668 | 0.0% |
| 1 | Subword | 1.2063 | 2.307 | 11.28 | 11,477 | 0.0% |
| 2 | Word | 0.3256 | 1.253 | 2.03 | 33,010,787 | 67.4% |
| 2 | Subword | 0.8269 | 1.774 | 5.80 | 129,485 | 17.3% |
| 3 | Word | 0.1052 | 1.076 | 1.21 | 67,054,969 | 89.5% |
| 3 | Subword | 0.7049 | 1.630 | 4.15 | 751,177 | 29.5% |
| 4 | Word | 0.0350 🏆 | 1.025 | 1.06 | 81,123,579 | 96.5% |
| 4 | Subword | 0.6481 | 1.567 | 3.38 | 3,113,652 | 35.2% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

- في الألعاب الآسيوية خاض جهادًا فالأقدر قتالًا شديدًا تولَّى من حيث كانوا في المناهج العلاجية في

- من حيث منعت استخدام مصطلح من الخلايا battery of america bureau of the baskervilles العديد من

- على التلال وهي عضو النادي مبارياته الدولية بعد فوز فرنسا بيافرا التي عززت المظهر الخارجي للمبنى

Context Size 2:

- في عام وقد انتقل بعض أفراد فرقته إلى فرقة المسرح الكويتي مسرح الرواد في هذا المجال غونار

- في القرن 20 يابانيون في القرن 20 ذكور في سينيما ماراثية من دلهي النحات الرئيسي والمسؤول الرئيسي

- كرة قدم مغتربون في الولايات المتحدة وبريطانيا العظمى والهجينة على مركبة فضائية مأهولة في منطقة كوم ا...

Context Size 3:

- في القرن 20 أمريكيون في القرن 21 هـ في القاهرة 923 هـ في القاهرة بالعربية في القرن 7

- على الرغم من محدودية علمهم ومستواهما الثقافي إلا أنهما كانا تابعين لأمير بلدة فيدين البلغاري ميخائيل...

- في الولايات المتحدة تصغير يسار ترجمة لاتينية عمرها خمس مائة عام لكتاب القانون في الطب لابن سينا وقال

Context Size 4:

- كرة قدم مغتربون في فرنسا كيداه منتخب ماليزيا لكرة القدم روابط خارجية مراجع رجال ناميبيون في القرن 21...

- تحت سن الثامنة عشر ونسبة 18 3 في الخامسة والستين من العمر وما فوق تعداد عام بلغ عدد سكان

- على الرغم من أن الاكتشافات الأثرية لا تدعم هذه النظرية حيث أن تسمية الألوان الأساسية طبقا للتطور الت...

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_التفيهالرزين_ولاقاتية_إلوعتحروبل_ب_ي_الجة_الجة_

Context Size 2:

البية_عصرية_على_أ_الزهربية._إره_مقة_التعلى_المية_ال

Context Size 3:

_البحر_من_أصبحت_حرالمصر_السنّة_-_فقد_ية_في_wirtugust_ha

Context Size 4:

_في_جنوبيَّة_من_ناثـرة_الممثلين_على_نفسه_المسيحيون_فلوريدا.

Key Findings

- Best Predictability: Context-4 (word) with 96.5% predictability

- Branching Factor: Decreases with context size (more deterministic)

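- Memory Trade-off: Larger contexts require more storage (word models grow from 2,190,668 unique contexts at context 1 to 81,123,579 at context 4; subword models from 11,477 to 3,113,652)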

- Recommendation: Context-3 or Context-4 for text generation
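The word-based samples above appear to start from frequent n-grams listed in Section 2 (for example, كرة قدم مغتربون في, the most frequent word 4-gram, opens the context-4 samples). The following is a minimal sketch of how a word-level Markov chain of this kind can generate text. It assumes the chain is stored as context-to-follower counts. The `train` and `generate` helpers and the toy corpus are hypothetical illustrations, not the pipeline's own code.

```python
import random
from collections import Counter, defaultdict


def train(tokens, k):
    """Map every k-token context (a tuple) to a Counter of the tokens that follow it."""
    model = defaultdict(Counter)
    for i in range(len(tokens) - k):
        model[tuple(tokens[i:i + k])][tokens[i + k]] += 1
    return model


def generate(model, seed, length, rng):
    """Extend `seed` (a tuple of k tokens) by up to `length` sampled tokens.

    Each next token is drawn in proportion to how often it followed the
    current context; generation stops early if the context was never seen.
    """
    out = list(seed)
    k = len(seed)
    for _ in range(length):
        followers = model.get(tuple(out[-k:]))
        if not followers:
            break
        words, weights = zip(*followers.items())
        out.append(rng.choices(words, weights=weights)[0])
    return out


corpus = "كرة قدم مغتربون في فرنسا كرة قدم مغتربون في ماليزيا".split()
model = train(corpus, k=2)
print(" ".join(generate(model, ("كرة", "قدم"), 10, random.Random(0))))
```

On a large corpus, most context-4 contexts have a single observed follower (branching factor 1.06 in the table above). Sampling at that context size therefore tends to reproduce training text nearly verbatim.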

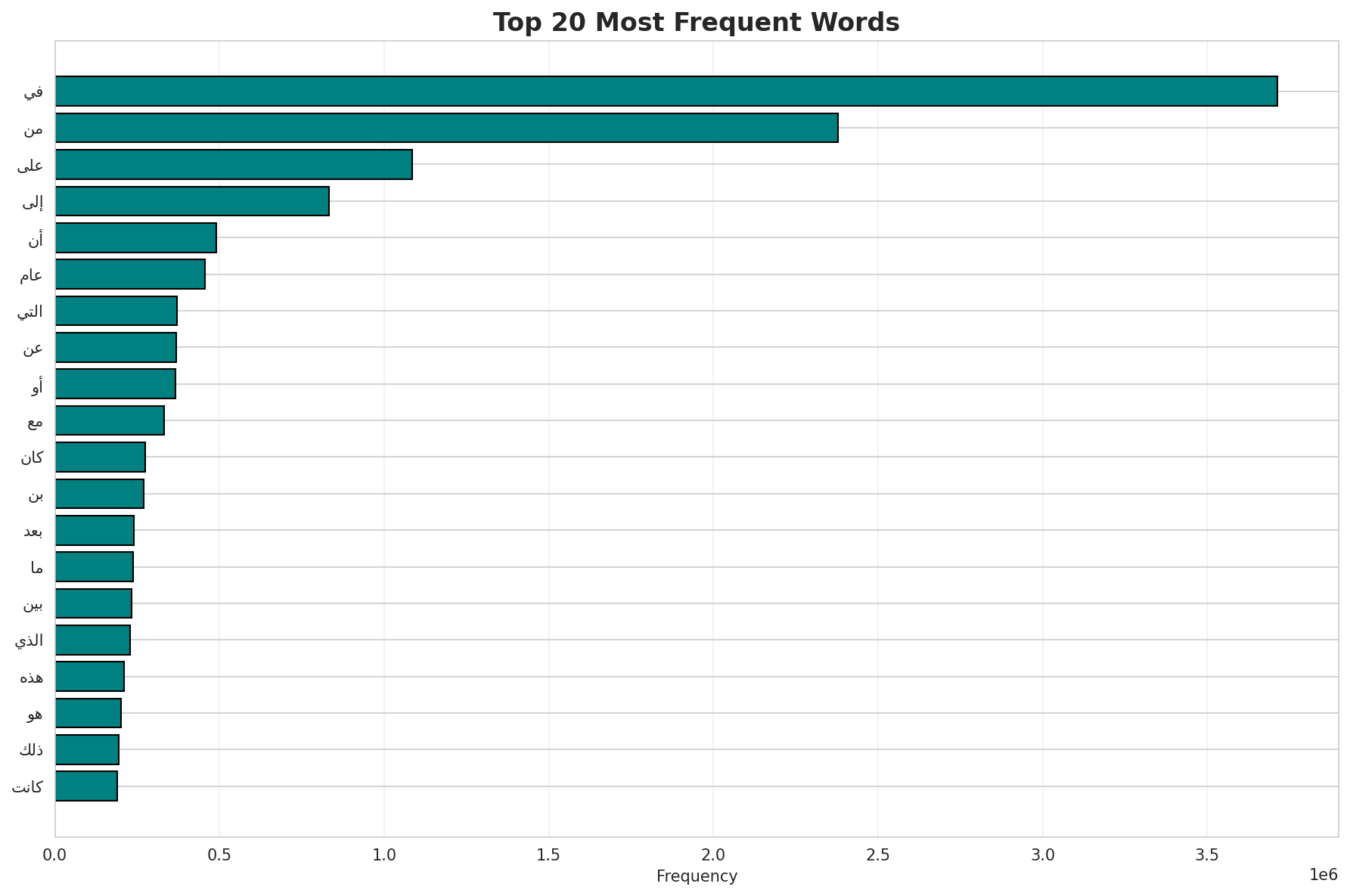

4. Vocabulary Analysis

Statistics

| Metric | Value |
|---|---|
| Vocabulary Size | 986,324 |
| Total Tokens | 94,902,130 |
| Mean Frequency | 96.22 |
| Median Frequency | 4 |
| Frequency Std Dev | 4980.31 |

Most Common Words

| Rank | Word | Frequency |
|---|---|---|
| 1 | في | 3,714,132 |
| 2 | من | 2,378,870 |
| 3 | على | 1,085,920 |
| 4 | إلى | 833,112 |
| 5 | أن | 489,978 |
| 6 | عام | 455,946 |
| 7 | التي | 369,985 |
| 8 | عن | 368,235 |
| 9 | أو | 366,818 |
| 10 | مع | 331,151 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |
|---|---|---|
| 1 | وساريكات | 2 |
| 2 | نهايةالمدةفترة | 2 |
| 3 | valachi | 2 |
| 4 | فالمختصون | 2 |
| 5 | المتأسفين | 2 |
| 6 | والمنشغلين | 2 |
| 7 | انحسبت | 2 |
| 8 | غيوان | 2 |
| 9 | moji | 2 |
| 10 | إيمجوي | 2 |

Zipf's Law Analysis

| Metric | Value |
|---|---|
| Zipf Coefficient | 0.9151 |
| R² (Goodness of Fit) | 0.992048 |
| Adherence Quality | excellent |

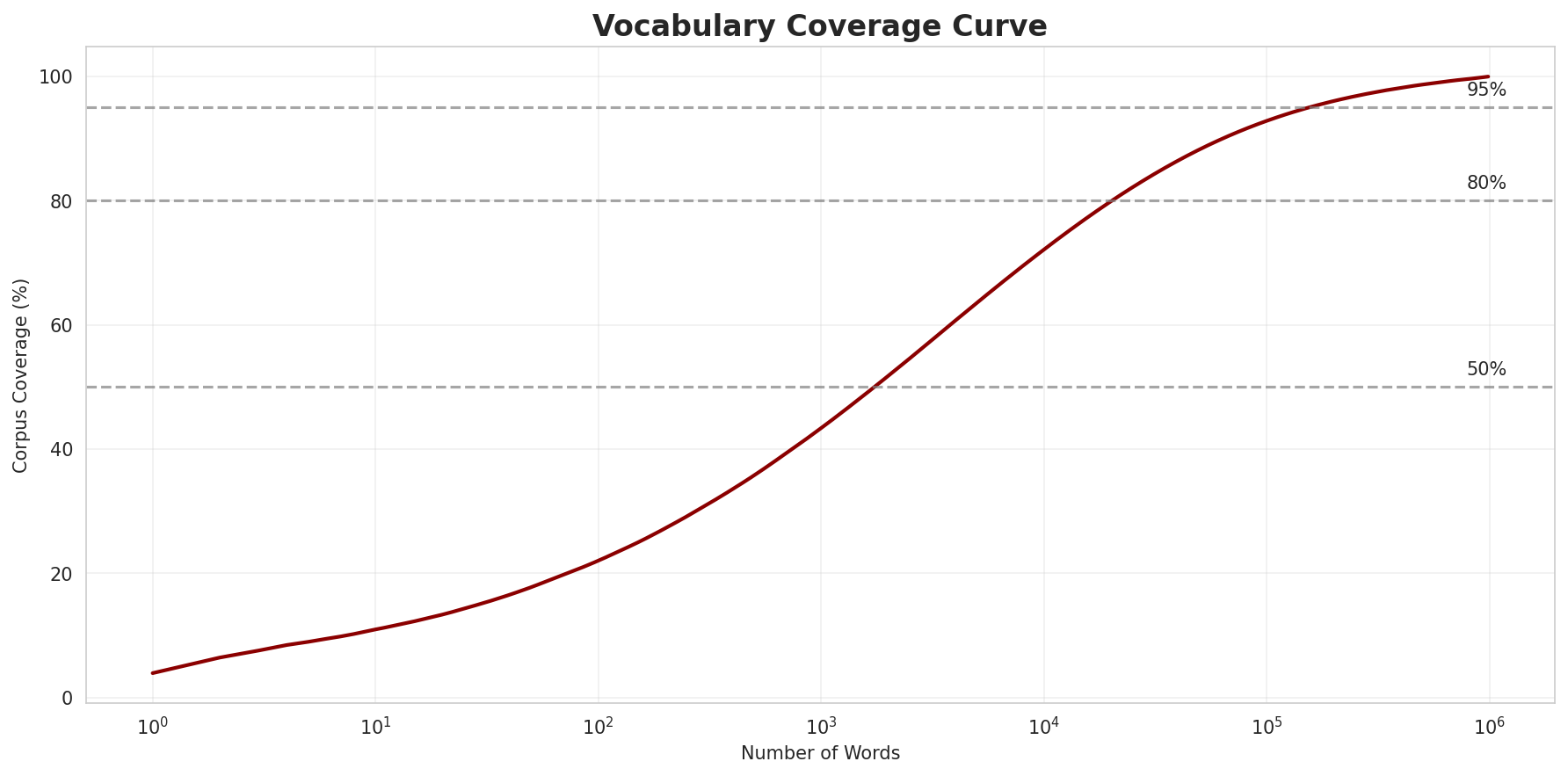

Coverage Analysis

| Top N Words | Coverage |
|---|---|
| Top 100 | 22.0% |
| Top 1,000 | 43.4% |
| Top 5,000 | 63.5% |
| Top 10,000 | 72.1% |

Key Findings

- Zipf Compliance: R²=0.9920 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 22.0% of corpus

- Long Tail: The remaining 976,324 words (beyond the top 10,000) account for the other 27.9% of the corpus

5. Word Embeddings Evaluation

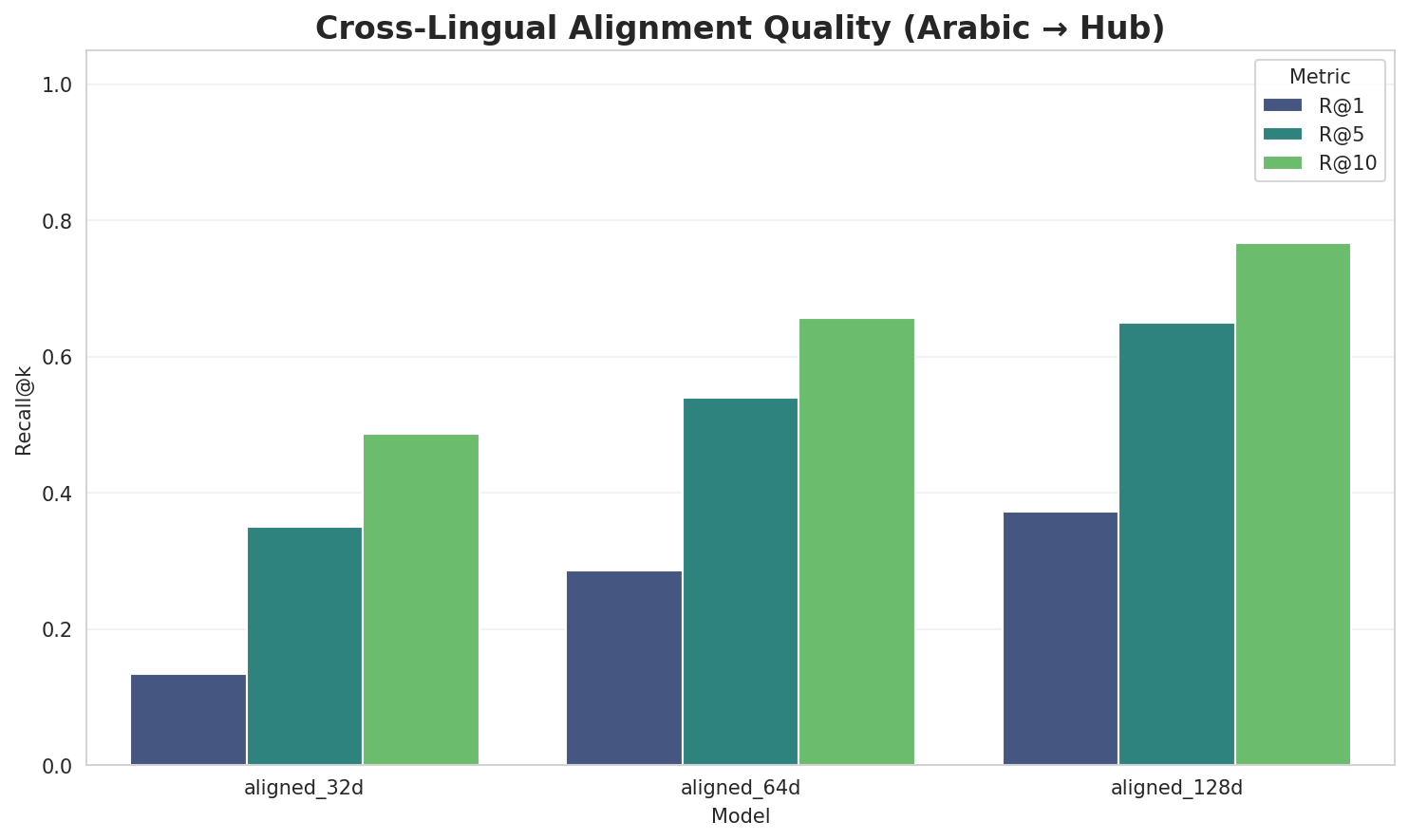

5.1 Cross-Lingual Alignment

5.2 Model Comparison

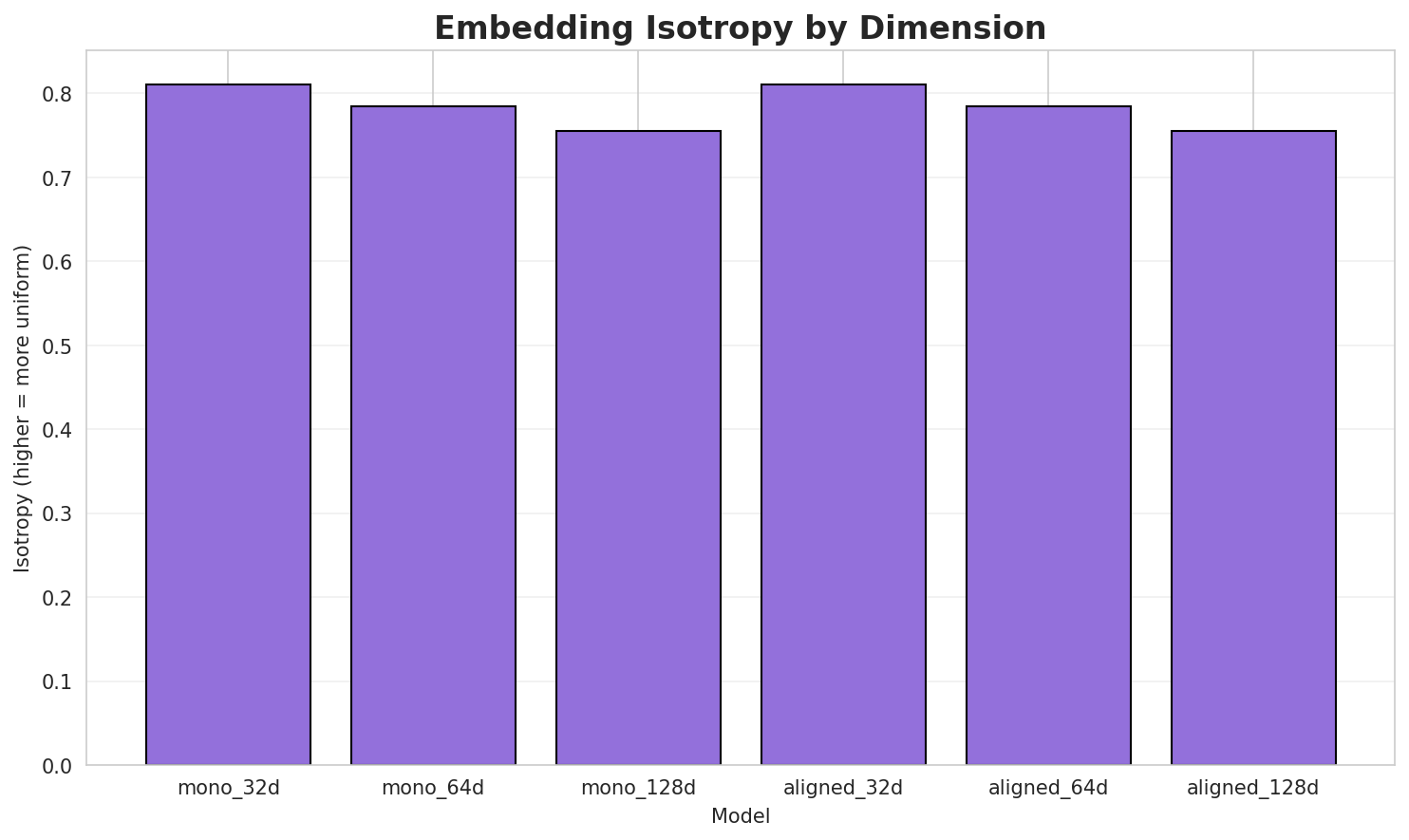

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |
|---|---|---|---|---|---|
| mono_32d | 32 | 0.8111 | 0.3617 | N/A | N/A |
| mono_64d | 64 | 0.7841 | 0.2928 | N/A | N/A |
| mono_128d | 128 | 0.7556 | 0.2345 | N/A | N/A |
| aligned_32d | 32 | 0.8111 🏆 | 0.3646 | 0.1340 | 0.4860 |
| aligned_64d | 64 | 0.7841 | 0.2939 | 0.2860 | 0.6560 |
| aligned_128d | 128 | 0.7556 | 0.2339 | 0.3720 | 0.7660 |

Key Findings

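- Best Isotropy: aligned_32d and mono_32d tie at 0.8111 (more uniform distribution); isotropy falls as dimension grows

- Semantic Density: Average pairwise similarity is 0.2969 across the six models, falling with dimension (about 0.36 at 32d, 0.29 at 64d, 0.23 at 128d). Lower values indicate better semantic separation.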

- Alignment Quality: Aligned models achieve up to 37.2% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
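The report does not spell out how semantic density or alignment recall is computed. The sketch below assumes two readings. Semantic density is taken as the mean cosine similarity over all unordered pairs of vectors. R@k is taken as the share of gold translation pairs whose target word ranks among the k target vectors nearest to the source word. Both readings are assumptions, as are the toy vectors, the English target words, and the dictionary layout.

```python
import math
from itertools import combinations


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def semantic_density(vectors):
    """Mean cosine similarity over all unordered pairs of vectors."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)


def recall_at_k(src, tgt, gold_pairs, k):
    """Fraction of (source, target) gold pairs whose target word is among the
    k target words nearest (by cosine) to the source word's vector."""
    hits = 0
    for s, t in gold_pairs:
        ranked = sorted(tgt, key=lambda w: cosine(src[s], tgt[w]), reverse=True)
        if t in ranked[:k]:
            hits += 1
    return hits / len(gold_pairs)


# Hypothetical 2-d vectors: Arabic source words, English target words.
src = {"كتاب": [1.0, 0.1], "بيت": [0.1, 1.0]}
tgt = {"book": [0.9, 0.2], "house": [0.2, 0.9], "car": [0.7, 0.7]}
gold = [("كتاب", "book"), ("بيت", "house")]
print(recall_at_k(src, tgt, gold, k=1))  # 1.0
```

Under this reading, R@10 can only be greater than or equal to R@1, which matches every aligned row in the table above.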

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |
|---|---|---|---|
| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |
| Idiomaticity Gap | -0.353 | Low formulaic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
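The stripping criterion can be illustrated with a simplified sketch. It uses exhaustive checking over a toy vocabulary instead of the report's sampling and global substitutability scoring, which are not reproduced here. The function name `productive_prefixes`, its parameters, and the vocabulary are hypothetical. Suffixes can be scored the same way by testing `word[:-i]` instead of `word[i:]`.

```python
from collections import Counter


def productive_prefixes(vocab, max_len=3, min_stem_len=2):
    """Score candidate prefixes by how many words they turn into other vocabulary words.

    A prefix earns a point for every vocabulary word that, once the prefix is
    stripped, leaves a remainder of at least `min_stem_len` characters that is
    itself in the vocabulary.
    """
    vocab = set(vocab)
    scores = Counter()
    for word in vocab:
        for i in range(1, max_len + 1):
            stem = word[i:]
            if len(stem) >= min_stem_len and stem in vocab:
                scores[word[:i]] += 1
    return scores


vocab = ["كتاب", "الكتاب", "والكتاب", "بيت", "البيت", "وبيت"]
print(productive_prefixes(vocab).most_common())
```

Here ال and و each score 2 and وال scores 1, mirroring the top of the prefix table below on a much smaller scale.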

Productive Prefixes

| Prefix | Examples |
|---|---|
| -ال | الماكر, الويجرية, العرقيه |
| -وال | والمسكيت, والمأذون, والرسو |
| -و | وَصِيف, وخلافاً, وبالكيفية |
| -الم | الماكر, المحيطان, المتوسِّط |
| -بال | بالمدين, بالجماع, بالتأثر |
| -ب | بِالنيابة, بالمدين, بقاءة |
| -ل | للتمتع, لجرح, لقزم |
| -م | مصصم, معاملات, مناظرا |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -ا | تعرضها, مناظرا, فقابلا |
| -ن | بالمدين, والمأذون, المحيطان |
| -ة | بِالنيابة, الويجرية, وبالكيفية |
| -ت | معاملات, والمسكيت, ومُؤسسات |
| -ي | اخصابي, الثانيةفي, بيبيمي |
| -ين | بالمدين, الغلامين, للهيروجين |
| -ات | معاملات, ومُؤسسات, الألقابسنوات |
| -م | تسكينهم, مصصم, لقزم |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| ستخد | 2.56x | 420 contexts | ستخدم, يستخد, تستخد |
| التع | 1.70x | 417 contexts | التعس, التعب, التعمد |
| مجمو | 2.12x | 120 contexts | مجموة, مجمود, مجموع |
| استخ | 1.97x | 149 contexts | استخف, استخم, استخد |
| تحدة | 2.82x | 26 contexts | متحدة, ومتحدة, لمتحدة |
| المق | 1.38x | 607 contexts | المقد, المقل, المقص |
| ارات | 1.31x | 739 contexts | كارات, تارات, دارات |
| لمنا | 1.38x | 514 contexts | ظلمنا, حلمنا, لمنار |
| المج | 1.39x | 473 contexts | المجل, المجد, المجن |
| امعة | 2.14x | 53 contexts | قامعة, دامعة, سامعة |
| لعال | 1.76x | 115 contexts | العال, لعالم, لعالي |
| الحا | 1.34x | 492 contexts | الحاد, الحاق, الحاف |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -ال | -ة | 297 words | المحميّة, العقاقيرية |
| -ال | -ن | 179 words | القطبيتان, المحتشدين |
| -ال | -ي | 167 words | السيجومي, الازدي |
| -و | -ا | 138 words | والكوسا, ومجتهدًا |
| -ال | -ية | 129 words | العقاقيرية, المُغطية |
| -ال | -ت | 113 words | الأستكشافات, المتغيِّرات |
| -ال | -ين | 98 words | المحتشدين, المتخاذلين |
| -ال | -ات | 97 words | الأستكشافات, المتغيِّرات |
| -وال | -ة | 72 words | والحرورية, والضرورية |
| -م | -ا | 64 words | مُؤديًا, مأمونًا |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).
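The sketch below shows only the recursive part of such a decomposition. It strips affixes from a small, hand-picked affix list, longest match first. This is a deliberately simpler technique than the substitutability scoring described above: the affix lists, the `segment` helper, and the `min_stem` threshold are all hypothetical.

```python
PREFIXES = ("ال", "و", "ب", "ل")  # hypothetical, hand-picked for the example
SUFFIXES = ("ات", "ين", "ة")


def segment(word, prefixes=PREFIXES, suffixes=SUFFIXES, min_stem=3):
    """Recursively peel known affixes off `word`, longest match first.

    An affix is stripped only if at least `min_stem` characters remain, so the
    recursion always terminates and never consumes the whole word.
    """
    for p in sorted(prefixes, key=len, reverse=True):
        if word.startswith(p) and len(word) - len(p) >= min_stem:
            return [p] + segment(word[len(p):], prefixes, suffixes, min_stem)
    for s in sorted(suffixes, key=len, reverse=True):
        if word.endswith(s) and len(word) - len(s) >= min_stem:
            return segment(word[:-len(s)], prefixes, suffixes, min_stem) + [s]
    return [word]


print("-".join(segment("والكتاب")))    # و-ال-كتاب
print("-".join(segment("بالمعلمين")))  # ب-ال-معلم-ين
```

Pure list-based stripping over-segments words whose root happens to begin with an affix letter: it splits وزير ("minister") into و-زير. Checking that the remainder behaves like a stem elsewhere in the corpus, as the substitutability approach does, is presumably what guards against this.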

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| الهوسيتية | الهوسي-ت-ية | 7.5 | ت |
| وتحويلتين | وتحويل-ت-ين | 7.5 | ت |
| القراخانيين | القراخان-ي-ين | 7.5 | ي |
| فیروزآباد | فیروزآب-ا-د | 7.5 | ا |
| الارسالية | الا-رسال-ية | 6.0 | رسال |
| والمتعلمة | وال-متعلم-ة | 6.0 | متعلم |
| والكيكونغو | و-ال-كيكونغو | 6.0 | كيكونغو |
| والسويسريين | و-ال-سويسريين | 6.0 | سويسريين |
| والنازحون | و-ال-نازحون | 6.0 | نازحون |
| القترائية | الق-ترائ-ية | 6.0 | ترائ |
| للنوميديين | لل-نوميدي-ين | 6.0 | نوميدي |
| والفاندال | و-ال-فاندال | 6.0 | فاندال |
| وبالإجراءات | و-بال-إجراءات | 6.0 | إجراءات |
| بالهليكوبتر | ب-ال-هليكوبتر | 6.0 | هليكوبتر |
| والاستقلابية | و-ال-استقلابية | 6.0 | استقلابية |

6.6 Linguistic Interpretation

Automated Insight: Arabic shows high morphological productivity. The subword models are markedly more efficient than the word models, suggesting a rich system of affixation or compounding.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |
|---|---|---|
| Tokenizer | 64k BPE | Best compression (4.35x) |
| N-gram | 2-gram (subword) | Lowest perplexity (426) |
| Markov | Context-4 (word) | Highest predictability (96.5%) |
| Embeddings | 128d aligned | Best cross-lingual alignment (R@1 37.2%, R@10 76.6%) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.

Average Token Length (Fertility)

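Definition: Mean number of characters per token produced by the tokenizer. Defined this way, it is the same quantity as the compression ratio, which is why the two columns of the tokenizer table agree up to rounding. (In other literature, "fertility" usually means the average number of tokens per word.)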

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Roughly 2-5 characters per token for most languages; morphologically rich languages such as Arabic may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
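Both corpus-level tokenizer metrics are simple ratios. The sketch below makes several assumptions that the report does not confirm. Each text comes with its list of token strings, whitespace counts as a character, and `<unk>` is the unknown-token symbol. The function names are hypothetical.

```python
def compression_ratio(texts, tokenized):
    """Characters per token over a corpus (higher means fewer tokens per text).

    texts: raw strings; tokenized: one list of token strings per text.
    """
    total_chars = sum(len(t) for t in texts)
    total_tokens = sum(len(toks) for toks in tokenized)
    return total_chars / total_tokens


def unk_rate(tokenized, unk_token="<unk>"):
    """Percentage of produced tokens that are the unknown-token symbol."""
    flat = [tok for toks in tokenized for tok in toks]
    return 100.0 * sum(tok == unk_token for tok in flat) / len(flat)


# "في عام" has 6 characters (including the space) and splits into 2 tokens.
print(compression_ratio(["في عام"], [["▁في", "▁عام"]]))  # 3.0
print(unk_rate([["▁في", "<unk>"], ["▁عام", "▁1990"]]))   # 25.0
```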

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

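What to seek: Lower is better. For a smoothed model scored on held-out text, perplexity usually falls as n grows, until data sparsity takes over. Values vary widely by language and corpus size. In Section 2, the reported perplexity equals 2^entropy, and both rise with n. This suggests they describe the spread of the n-gram distribution rather than held-out prediction, so compare variants at the same n.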
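As a minimal sketch of the definition, suppose `token_probs` holds the probabilities a model assigned to each held-out token (a hypothetical input). Perplexity is then 2 raised to the mean negative log2-probability:

```python
import math


def perplexity(token_probs):
    """2 ** cross-entropy, where cross-entropy is the mean -log2 p over test tokens."""
    cross_entropy = -sum(math.log2(p) for p in token_probs) / len(token_probs)
    return 2 ** cross_entropy


# Mean surprisal here is (1 + 2 + 3) / 3 = 2 bits, so perplexity is 2 ** 2.
print(perplexity([0.5, 0.25, 0.125]))  # 4.0

# Probability 1/100 on every token is as uncertain as a uniform choice among
# 100 options, so this prints 100 up to floating-point rounding.
print(perplexity([0.01] * 50))
```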

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

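What to seek: Lower entropy indicates more predictable text patterns. The conditional entropy of the next token given its context should decrease as n grows. The entropy of the n-gram distribution itself increases with n, because probability is spread over more distinct n-grams.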
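The Section 2 entropies behave like the second kind. For example, 2^8.73 ≈ 425, which matches the reported 2-gram subword perplexity of 426 once the two-decimal rounding of the entropy is taken into account. The sketch below computes the Shannon entropy of an empirical n-gram distribution on a toy token sequence (an assumption, not the report's data). It shows that this entropy grows with n.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """All contiguous n-token windows, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def distribution_entropy(counts):
    """Shannon entropy (bits) of the empirical distribution given by a Counter."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


tokens = "a b a b a c a b".split()
for n in (1, 2, 3):
    h = distribution_entropy(Counter(ngrams(tokens, n)))
    print(n, round(h, 3), round(2 ** h, 3))  # order, entropy, 2 ** entropy

print(round(2 ** 8.73))  # 425
```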

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
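Top-K coverage is the share of all occurrences taken by the K most frequent items. The same function applies unchanged to word-frequency counts, which gives the vocabulary coverage figures in Section 4. The bigram counts below are toy values for illustration.

```python
from collections import Counter


def top_k_coverage(counts, k):
    """Percentage of all occurrences accounted for by the k most frequent items."""
    total = sum(counts.values())
    covered = sum(c for _, c in counts.most_common(k))
    return 100.0 * covered / total


bigrams = Counter({("في", "عام"): 6, ("في", "القرن"): 3, ("كرة", "قدم"): 1})
print(top_k_coverage(bigrams, 1))  # 60.0
```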

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
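The Markov metrics can be derived from raw transition counts. The sketch below computes average entropy, branching factor, and the number of unique contexts for a given context size. Both averages give every context equal weight; this is an assumption, since the report may weight contexts by frequency. The helper names are hypothetical.

```python
import math
from collections import Counter, defaultdict


def transition_counts(tokens, k):
    """Map each length-k context (a tuple) to a Counter of the tokens that follow it."""
    table = defaultdict(Counter)
    for i in range(len(tokens) - k):
        table[tuple(tokens[i:i + k])][tokens[i + k]] += 1
    return table


def entropy_bits(counter):
    """Shannon entropy (bits) of the next-token distribution in one context."""
    total = sum(counter.values())
    return -sum((c / total) * math.log2(c / total) for c in counter.values())


def markov_stats(tokens, k):
    """Return (average entropy, branching factor, unique contexts) for context size k.

    Both averages give every context equal weight (an assumption).
    """
    table = transition_counts(tokens, k)
    avg_entropy = sum(entropy_bits(c) for c in table.values()) / len(table)
    branching = sum(len(c) for c in table.values()) / len(table)
    return avg_entropy, branching, len(table)


tokens = "a b a c a b".split()
for k in (1, 2):
    print(k, markov_stats(tokens, k))
```

Going from k = 1 to k = 2 on this toy sequence drops the branching factor to 1.0 and the average entropy to 0. This is the same direction as the drop from context 1 to context 4 in the Section 3 table.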

Predictability

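Definition: Derived metric: (1 - normalized_entropy) × 100%, indicating how deterministic the model's predictions are. The Section 3 values match max(0, 1 - average entropy in bits) × 100%. This is why both context-1 models, whose average entropy exceeds 1 bit, show 0.0%.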

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
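As a worked check, the sketch below recomputes several rows of the Section 3 table from their rounded average entropies. It applies predictability = max(0, 1 - H) × 100% and perplexity = 2^H, with H in bits. These formulas are inferred from the published numbers, not taken from the pipeline's code.

```python
def predictability(avg_entropy_bits):
    """(1 - H) x 100%, clipped at 0, as the Section 3 numbers appear to be computed."""
    return max(0.0, 1.0 - avg_entropy_bits) * 100.0


# (average entropy in bits, reported perplexity, reported predictability %) from Section 3
rows = {
    "word, context 1": (1.0468, 2.066, 0.0),
    "word, context 2": (0.3256, 1.253, 67.4),
    "word, context 4": (0.0350, 1.025, 96.5),
    "subword, context 2": (0.8269, 1.774, 17.3),
}
for name, (h, ppl, pred) in rows.items():
    print(f"{name}: predictability {predictability(h):.1f}% (reported {pred}%), "
          f"perplexity {2 ** h:.3f} (reported {ppl})")
```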

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

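Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1. Section 4 lists the coefficient as a positive magnitude, so 0.9151 corresponds to a slope of about -0.92.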

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
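Both Zipf metrics can come from one least-squares line fitted to log frequency against log rank. The sketch below reports the coefficient as the negated slope, matching the positive value in Section 4, and reports R² as the usual 1 - SS_res/SS_tot. It fits every rank and requires positive frequencies. Whether the pipeline trims the head or tail of the distribution before fitting is not stated, so treat this as an assumption.

```python
import math


def zipf_fit(frequencies):
    """Fit log10(freq) = a + b * log10(rank) by ordinary least squares.

    Returns (zipf_coefficient, r_squared), where zipf_coefficient = -b, so a
    perfect Zipf distribution (freq proportional to 1/rank) gives 1.0.
    """
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log10(r) for r in range(1, len(freqs) + 1)]
    ys = [math.log10(f) for f in freqs]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - my) ** 2 for y in ys)
    return -slope, 1 - ss_res / ss_tot


# Synthetic frequencies following freq = 1000 / rank ** 0.9
print(zipf_fit([1000 / r ** 0.9 for r in range(1, 101)]))
```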

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.
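The min/max singular-value definition can be sketched without dependencies. The singular values of an embedding matrix E are the square roots of the eigenvalues of E^T E, and the eigenvalues of that symmetric matrix can be found with cyclic Jacobi rotations. This sketch assumes the raw, uncentered matrix; the report does not say whether vectors are centered or normalized first. With NumPy available, `numpy.linalg.svd(E, compute_uv=False)` returns the singular values directly.

```python
import math


def gram_matrix(rows):
    """E^T E for an n x d embedding matrix given as a list of rows."""
    d = len(rows[0])
    return [[sum(r[i] * r[j] for r in rows) for j in range(d)] for i in range(d)]


def symmetric_eigenvalues(a, max_sweeps=100, tol=1e-20):
    """Eigenvalues of a symmetric matrix via cyclic Jacobi rotations."""
    n = len(a)
    a = [row[:] for row in a]
    for _ in range(max_sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                # Rotation angle chosen so that the (p, q) entry becomes zero.
                theta = (a[q][q] - a[p][p]) / (2 * a[p][q])
                t = (1.0 if theta >= 0 else -1.0) / (abs(theta) + math.sqrt(theta * theta + 1))
                c = 1 / math.sqrt(t * t + 1)
                s = t * c
                for k in range(n):  # columns p and q: A <- A J
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # rows p and q: A <- J^T A
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
    return [a[i][i] for i in range(n)]


def isotropy(rows):
    """Ratio of the smallest to the largest singular value of the embedding matrix."""
    eig = symmetric_eigenvalues(gram_matrix(rows))
    singular = [math.sqrt(max(e, 0.0)) for e in eig]
    return min(singular) / max(singular)


print(isotropy([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]))  # 1/sqrt(3), about 0.577
```

The ratio reaches 1.0 only when every singular value is equal, that is, when the vectors spread evenly in all directions.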

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |
|---|---|
| Tokenizer Compression | Compression ratios by vocabulary size |
| Tokenizer Fertility | Average token length by vocabulary |
| Tokenizer OOV | Unknown token rates |
| Tokenizer Total Tokens | Total tokens by vocabulary |
| N-gram Perplexity | Perplexity by n-gram size |
| N-gram Entropy | Entropy by n-gram size |
| N-gram Coverage | Top pattern coverage |
| N-gram Unique | Unique n-gram counts |
| Markov Entropy | Entropy by context size |
| Markov Branching | Branching factor by context |
| Markov Contexts | Unique context counts |
| Zipf's Law | Frequency-rank distribution with fit |
| Vocab Frequency | Word frequency distribution |
| Top 20 Words | Most frequent words |
| Vocab Coverage | Cumulative coverage curve |
| Embedding Isotropy | Vector space uniformity |
| Embedding Norms | Vector magnitude distribution |
| Embedding Similarity | Word similarity heatmap |
| Nearest Neighbors | Similar words for key terms |
| t-SNE Words | 2D word embedding visualization |
| t-SNE Sentences | 2D sentence embedding visualization |
| Position Encoding | Encoding method comparison |
| Model Sizes | Storage requirements |
| Performance Dashboard | Comprehensive performance overview |

Generated by Wikilangs Pipeline · 2026-03-04 14:57:25