ltx2.3-gguf

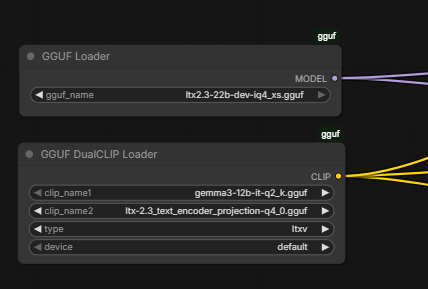

see picture above for setup (inside sub-graph)

- pull `ltx2.3-22b-dev-iq4_xs.gguf` to `diffusion_models`
- pull `ltx2.3_text_encoder_projection-q4_0.gguf` and gemma3-12b-it to `text_encoders`

btw, replace the ltx2.3-22b-dev (or -distill) checkpoint in the workflow with this universal checkpoint clip (see below)

- pull `ltx2.3-22b-checkpoint_fp8_e4m3fn.safetensors` to `checkpoints`
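
to double-check the file placement above, here is a minimal sketch that reports which of the files named in this readme are missing. assumptions: the model folders live under `ComfyUI/models` (adjust the path to your install), and `missing_files` is a hypothetical helper name, not part of any node. the gemma3-12b-it file is not checked because its exact filename is not given here.

```python
from pathlib import Path

# Folder -> files named in this readme. The gemma3-12b-it file is left out
# because its exact filename is not stated.
EXPECTED = {
    "diffusion_models": ["ltx2.3-22b-dev-iq4_xs.gguf"],
    "text_encoders": ["ltx2.3_text_encoder_projection-q4_0.gguf"],
    "checkpoints": ["ltx2.3-22b-checkpoint_fp8_e4m3fn.safetensors"],
}


def missing_files(models_root):
    """Return 'folder/file' entries that are not present under models_root."""
    root = Path(models_root)
    return [
        f"{folder}/{name}"
        for folder, names in EXPECTED.items()
        for name in names
        if not (root / folder / name).is_file()
    ]


if __name__ == "__main__":
    # Assumption: model folders are under ./ComfyUI/models
    for entry in missing_files("ComfyUI/models"):
        print("missing:", entry)
```

an empty output means all three files were found in the expected folders.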

note: you don't need any extra nodes to run ltx2.3 with this setup; it should run on an entry-level gpu without problems, just expect to wait slightly longer. if you opt for the gemma3 gguf, you might need protobuf to rebuild the tokenizer; simply install it with:

.\python_embeded\python.exe -s -m pip install protobuf
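
to see whether protobuf is already available before installing, a small sketch like the one below can be run with the same embedded interpreter. `has_module` is a hypothetical helper name; the only assumption is that the protobuf package is importable as `google.protobuf`, which is how it is normally exposed.

```python
import importlib.util


def has_module(name):
    """Return True if `name` can be found by the current interpreter."""
    try:
        return importlib.util.find_spec(name) is not None
    except ModuleNotFoundError:
        # Raised when a parent package (here `google`) is itself missing.
        return False


if __name__ == "__main__":
    print("protobuf installed:", has_module("google.protobuf"))
```

save it under any name and run it with `.\python_embeded\python.exe` so it checks the interpreter that ComfyUI actually uses, not a system-wide python.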


base model for gguf-org/ltx2.3-gguf: Lightricks/LTX-2.3